Introduction

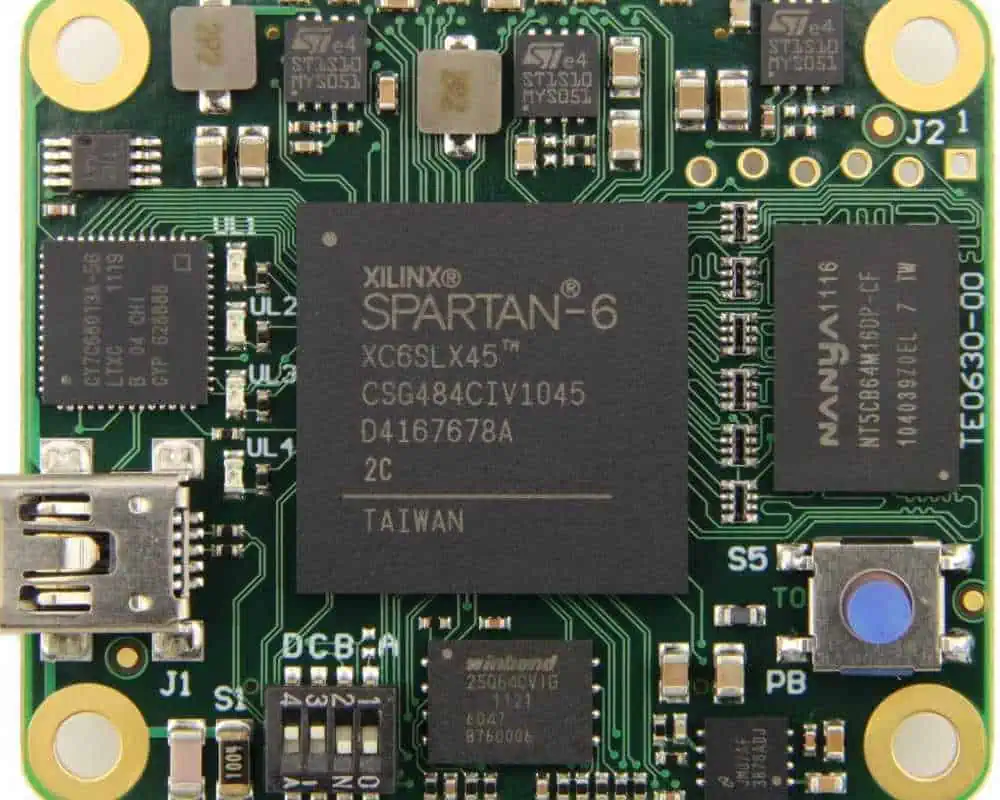

Field-Programmable Gate Arrays (FPGAs) are reconfigurable hardware devices that bridge the gap between software flexibility and hardware performance. Xilinx, now part of AMD, has been a leading provider of FPGA technology, and its devices have been applied across many domains, including cryptocurrency mining. Although Ethereum transitioned from Proof of Work to Proof of Stake in September 2022, the principles behind FPGA-based mining acceleration remain relevant for education and for mining other proof-of-work cryptocurrencies.

This article examines the design, implementation, and optimization of Xilinx FPGA-based Ethereum mining acceleration systems, covering the technical challenges, architectural considerations, and performance characteristics of this approach to cryptocurrency mining.

Background: Ethereum Mining and the Ethash Algorithm

Ethereum mining, before the transition to Proof of Stake, relied on the Ethash algorithm, a memory-hard proof-of-work function designed to be ASIC-resistant. Unlike Bitcoin’s compute-bound SHA-256 proof of work, Ethash was engineered to narrow the advantage of specialized hardware by making hash throughput depend on memory bandwidth and capacity rather than on raw arithmetic speed.

The Ethash algorithm operates through several key stages:

- DAG Generation: Creates a large dataset, commonly called the DAG (directed acyclic graph), that grows every epoch (30,000 blocks) and reached sizes of several gigabytes

- Hash Computation: Performs pseudorandom memory accesses to the DAG while computing hashes

- Nonce Search: Iteratively searches for nonce values that produce hash results meeting the network difficulty target

This memory-intensive nature made Ethash particularly suitable for GPU mining, as graphics cards possessed the necessary memory bandwidth and parallel processing capabilities. However, it also presented unique opportunities for FPGA implementation, leveraging the reconfigurable nature of these devices to create highly optimized mining accelerators.
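The three stages can be illustrated with a deliberately simplified model. The sketch below is not Ethash: it uses Python's `hashlib.sha3_256` and `hashlib.sha3_512` as stand-ins for the original Keccak variants (which use different padding), a tiny dataset of 64-byte items, and hypothetical function names (`build_toy_dataset`, `toy_hash`, `search_nonce`). Only the FNV mixing function and the `2**256 // difficulty` target rule follow the published Ethash design.

```python
import hashlib

FNV_PRIME = 0x01000193  # 32-bit FNV prime used by Ethash's mixing function
WORD_MASK = 0xFFFFFFFF


def fnv(a, b):
    """Ethash-style FNV mix of two 32-bit words."""
    return ((a * FNV_PRIME) ^ b) & WORD_MASK


def build_toy_dataset(seed, items):
    """Stage 1 (toy): derive a small stand-in for the DAG as 64-byte items."""
    dataset = []
    item = hashlib.sha3_512(seed).digest()
    for _ in range(items):
        dataset.append(item)
        item = hashlib.sha3_512(item).digest()
    return dataset


def toy_hash(header, nonce, dataset, accesses=64):
    """Stage 2 (toy): fold pseudorandomly selected dataset items into a mix."""
    seed = hashlib.sha3_512(header + nonce.to_bytes(8, "little")).digest()
    mix = [int.from_bytes(seed[i:i + 4], "little") for i in range(0, 64, 4)]
    for i in range(accesses):
        # The next index depends on the current mix, so reads cannot be
        # predicted before the previous one has been folded in.
        index = fnv(i ^ mix[0], mix[i % len(mix)]) % len(dataset)
        item = dataset[index]
        words = [int.from_bytes(item[j:j + 4], "little") for j in range(0, 64, 4)]
        mix = [fnv(m, w) for m, w in zip(mix, words)]
    mix_bytes = b"".join(m.to_bytes(4, "little") for m in mix)
    return int.from_bytes(hashlib.sha3_256(seed + mix_bytes).digest(), "big")


def search_nonce(header, dataset, difficulty, start=0, limit=100_000):
    """Stage 3: try nonces until the hash is at or below the target."""
    target = 2**256 // difficulty
    for nonce in range(start, start + limit):
        if toy_hash(header, nonce, dataset) <= target:
            return nonce
    return None


if __name__ == "__main__":
    dag = build_toy_dataset(b"toy-epoch-seed", 256)
    print(search_nonce(b"toy-header", dag, difficulty=16))
```

The data dependency inside the access loop is what makes the real algorithm latency- and bandwidth-sensitive: each read address is known only after the previous read has returned.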

Xilinx FPGA Architecture and Advantages

Xilinx FPGAs offer several architectural advantages that make them compelling platforms for cryptocurrency mining acceleration:

Reconfigurable Logic Fabric

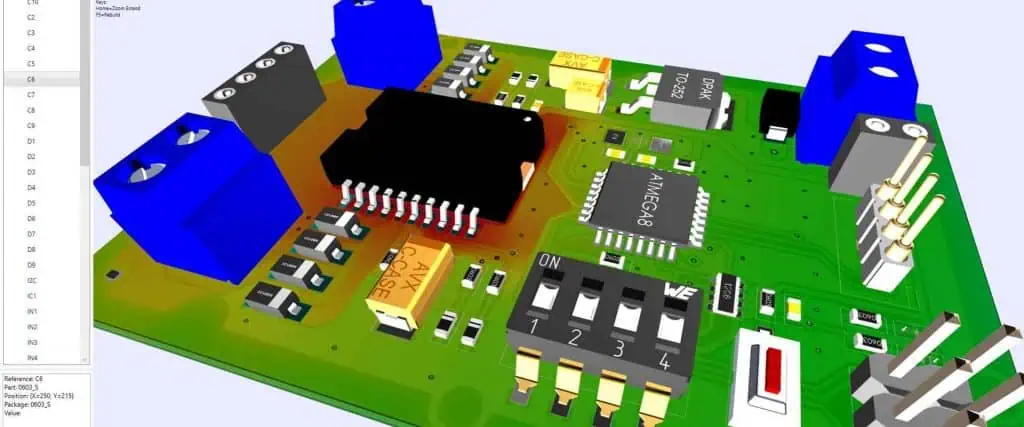

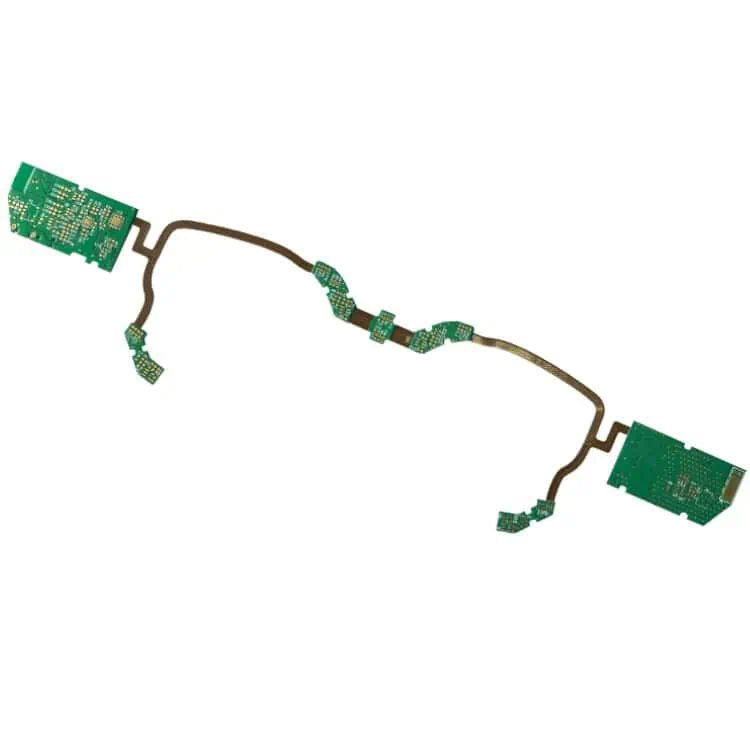

The fundamental strength of Xilinx FPGAs lies in their reconfigurable logic fabric, consisting of configurable logic blocks (CLBs), digital signal processing (DSP) slices, and block RAM (BRAM) resources. This architecture allows for the implementation of custom datapaths optimized specifically for the computational requirements of mining algorithms.

High-Bandwidth Memory Interfaces

Modern Xilinx FPGAs in the UltraScale+ family support DDR4 interfaces, and the HBM-equipped members of that family add in-package high-bandwidth memory (HBM), providing the memory bandwidth required for efficient Ethash computation. Custom request scheduling logic placed in front of the memory controllers allows access patterns to be tuned for maximum throughput.
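Why bandwidth matters so much can be shown with simple arithmetic. Each Ethash hash performs 64 DAG reads of 128 bytes, so memory bandwidth sets a hard ceiling on hashrate regardless of how much logic is available. The sketch below computes that ceiling; the function name is hypothetical, and any bandwidth figure passed in should be treated as a nominal peak, not a measured sustained rate.

```python
ACCESSES_PER_HASH = 64   # DAG reads per Ethash hash
BYTES_PER_ACCESS = 128   # bytes fetched per DAG read


def bandwidth_bound_hashrate(bandwidth_bytes_per_s):
    """Upper bound on hashes per second for a given memory bandwidth."""
    bytes_per_hash = ACCESSES_PER_HASH * BYTES_PER_ACCESS  # 8192 bytes
    return bandwidth_bytes_per_s / bytes_per_hash


if __name__ == "__main__":
    # Illustrative nominal peak bandwidths (assumptions, not measurements).
    for label, gb_per_s in [("single DDR4-2400 channel", 19.2),
                            ("HBM2 at a nominal 460 GB/s", 460.0)]:
        mhs = bandwidth_bound_hashrate(gb_per_s * 1e9) / 1e6
        print(f"{label}: at most {mhs:.2f} MH/s")
```

Under these assumptions a single DDR4-2400 channel caps out near 2.3 MH/s, while a nominal 460 GB/s of HBM2 bandwidth allows at most about 56 MH/s. Real designs land below the bound because random 128-byte reads rarely achieve peak bandwidth.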

Parallel Processing Capabilities

The inherently parallel nature of FPGA architecture allows for the implementation of multiple independent mining cores on a single device. This parallelism can be exploited at multiple levels, from individual hash function implementations to complete mining pipeline parallelization.

Power Efficiency

When properly optimized, FPGA implementations can achieve better power efficiency, measured in hashes per joule, than GPU-based mining solutions. This matters because electricity is a dominant operating cost in cryptocurrency mining.

System Architecture Design

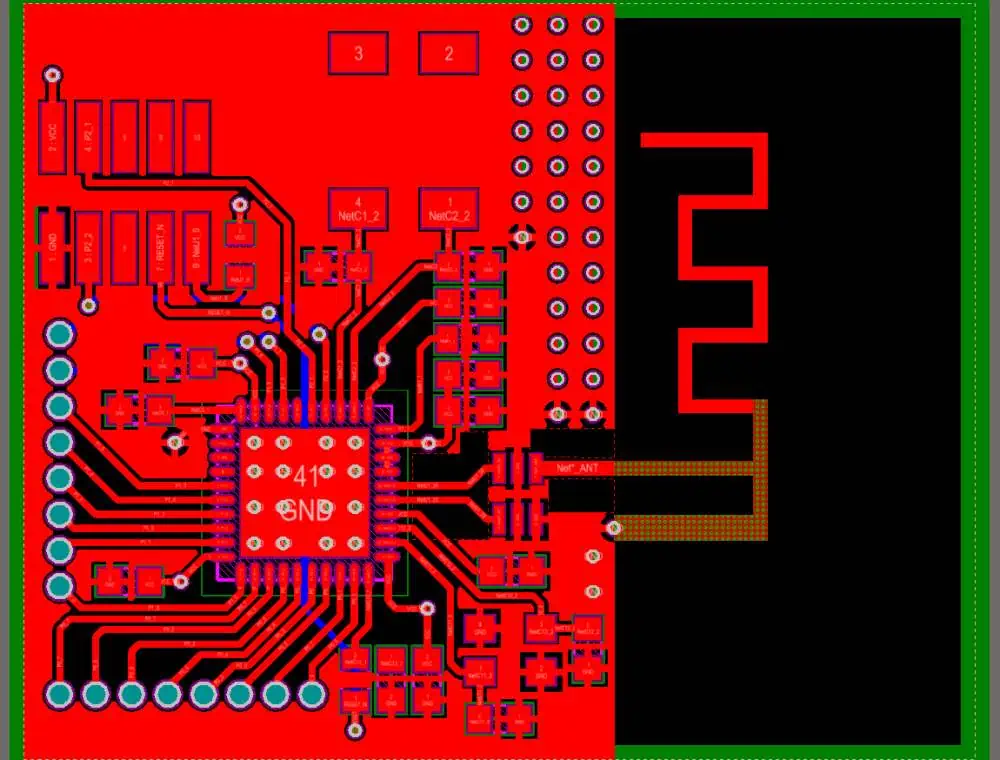

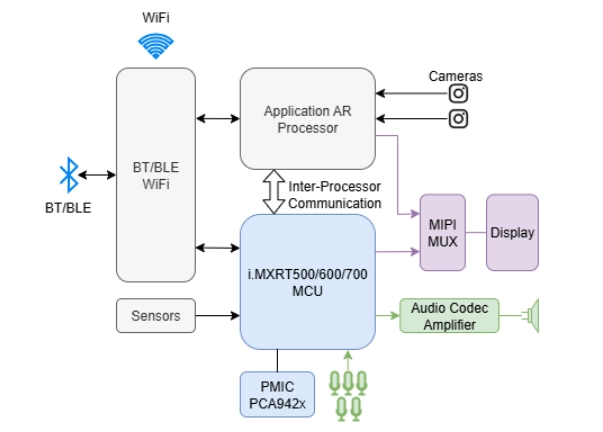

High-Level System Overview

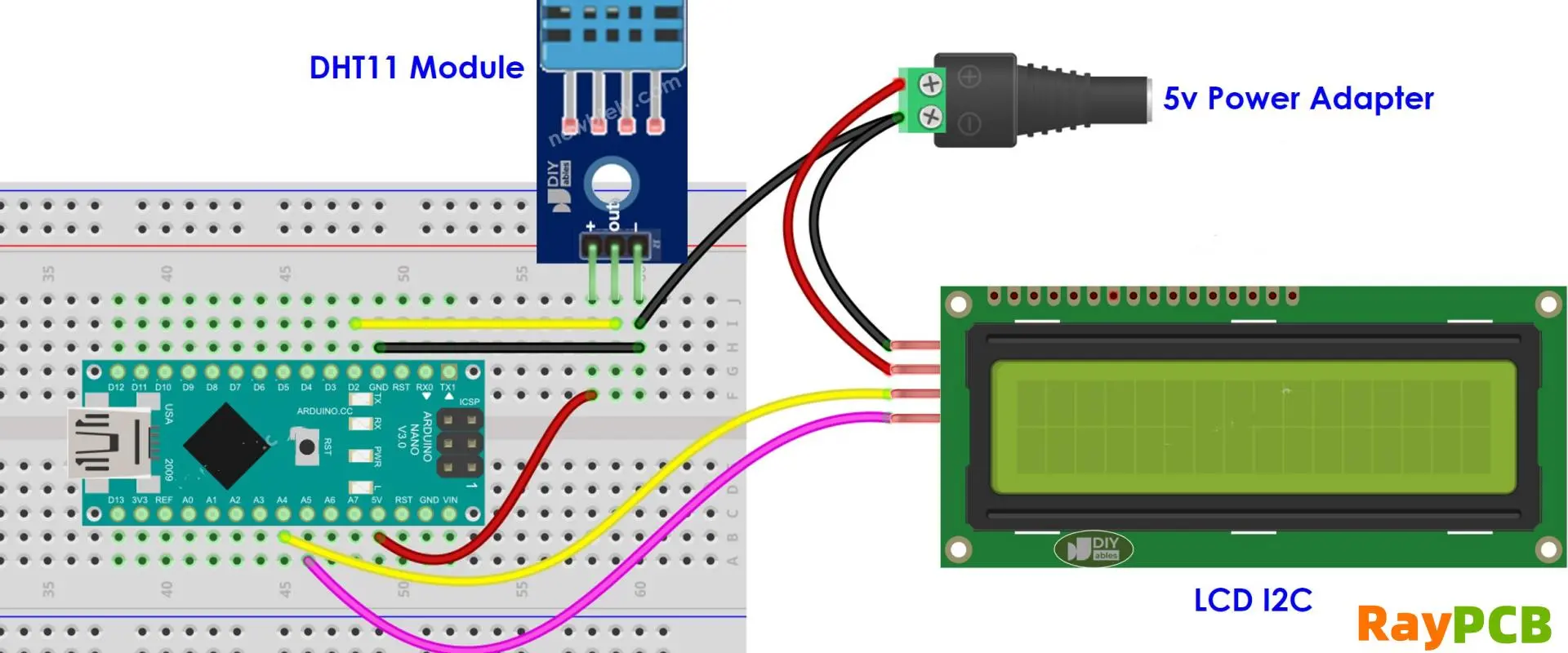

A comprehensive Xilinx FPGA-based Ethereum mining acceleration system consists of several interconnected components:

Host Interface Layer: Manages communication between the FPGA and host system, typically implemented through PCIe interfaces. This layer handles work distribution, result collection, and system configuration.

Work Distribution Engine: Coordinates the distribution of mining work packages across multiple parallel mining cores, ensuring optimal utilization of available computational resources.

Mining Core Array: The heart of the acceleration system, consisting of multiple parallel Ethash computation engines, each capable of independent operation.

Memory Subsystem: Implements high-performance memory controllers and manages the storage and access of the large DAG dataset required for Ethash computation.

Result Processing Pipeline: Handles the verification and formatting of mining results before transmission back to the host system.
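As a rough illustration of what the work distribution engine does, the sketch below splits one work package's nonce range into disjoint, contiguous slices so that no two cores ever test the same nonce. The function name and the contiguous-slice policy are assumptions made for illustration; a real design might instead give each core a fixed nonce prefix.

```python
def partition_nonce_space(start, count, num_cores):
    """Split [start, start + count) into one contiguous range per core."""
    if num_cores <= 0:
        raise ValueError("num_cores must be positive")
    base, extra = divmod(count, num_cores)
    ranges = []
    cursor = start
    for core in range(num_cores):
        # The first `extra` cores take one additional nonce each.
        size = base + (1 if core < extra else 0)
        ranges.append(range(cursor, cursor + size))
        cursor += size
    return ranges


if __name__ == "__main__":
    for core, r in enumerate(partition_nonce_space(0, 2**32, 6)):
        print(f"core {core}: nonces {r.start}..{r.stop - 1}")
```

In hardware the same effect is usually achieved with a per-core base register and a local counter, which avoids any coordination between cores while they run.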

Memory Subsystem Design

The memory subsystem is one of the most critical components of an FPGA-based Ethash mining system. The DAG dataset, which grew beyond 4 GB, is far larger than the on-chip block RAM, so it must be stored in off-chip DDR4 or in-package HBM and read with high bandwidth to sustain computational throughput.
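The DAG size for a given block height follows from constants in the Ethash specification: the dataset starts at 2^30 bytes, grows by 2^23 bytes per 30,000-block epoch, and is then reduced until the number of 128-byte rows is prime. The sketch below reproduces that rule; the trial-division `is_prime` helper is a simple choice made here for self-containment.

```python
DATASET_BYTES_INIT = 2**30    # dataset size at epoch 0 before adjustment
DATASET_BYTES_GROWTH = 2**23  # growth per epoch
MIX_BYTES = 128               # row width in bytes
EPOCH_LENGTH = 30000          # blocks per epoch


def is_prime(n):
    """Trial division; adequate for the row counts involved here."""
    if n < 2:
        return False
    if n % 2 == 0:
        return n == 2
    i = 3
    while i * i <= n:
        if n % i == 0:
            return False
        i += 2
    return True


def dataset_size(block_number):
    """DAG size in bytes for the epoch containing block_number."""
    epoch = block_number // EPOCH_LENGTH
    size = DATASET_BYTES_INIT + DATASET_BYTES_GROWTH * epoch - MIX_BYTES
    while not is_prime(size // MIX_BYTES):
        size -= 2 * MIX_BYTES
    return size


if __name__ == "__main__":
    for block in (0, 15_000_000):
        print(block, f"{dataset_size(block) / 2**30:.3f} GiB")
```

For block 0 this gives 1,073,739,904 bytes, and for an example block height of 15,000,000 (epoch 500) it gives roughly 4.9 GiB, which is why external memory capacity had to be provisioned with headroom for growth.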

Effective memory subsystem design typically employs:

Multi-Port Memory Controllers: Implementation of multiple independent memory controllers to maximize aggregate bandwidth and reduce access conflicts between parallel mining cores.

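Caching and Request Buffering: Because DAG accesses in Ethash are pseudorandom and spread across a multi-gigabyte dataset, conventional caches see very little reuse. Effective memory bandwidth is improved mainly by buffering and reordering outstanding requests so that every memory channel stays busy.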

Memory Access Optimization: Custom memory access scheduling algorithms that account for the specific access patterns of the Ethash algorithm to minimize latency and maximize throughput.
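One way to reason about multi-controller designs is to model how pseudorandom DAG reads spread across memory channels. The sketch below assumes simple low-order interleaving of 128-byte pages and uses Python's seeded `random.Random` as a stand-in for Ethash's access pattern; the channel count of 32 and all names are illustrative assumptions.

```python
import random
from collections import Counter


def channel_for_page(page_index, num_channels):
    """Low-order interleaving: consecutive pages map to consecutive channels."""
    return page_index % num_channels


def simulate_channel_load(num_pages, num_channels, num_accesses, seed=0):
    """Count how many pseudorandom page reads land on each channel."""
    rng = random.Random(seed)
    load = Counter()
    for _ in range(num_accesses):
        page = rng.randrange(num_pages)
        load[channel_for_page(page, num_channels)] += 1
    return [load[c] for c in range(num_channels)]


if __name__ == "__main__":
    loads = simulate_channel_load(num_pages=1 << 25, num_channels=32,
                                  num_accesses=64_000)
    print("min", min(loads), "max", max(loads))
```

With uniformly random addresses, interleaving tends to balance long-run load across channels, so the remaining problem is short-term collisions, which is where request queues and scheduling in front of each controller help.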

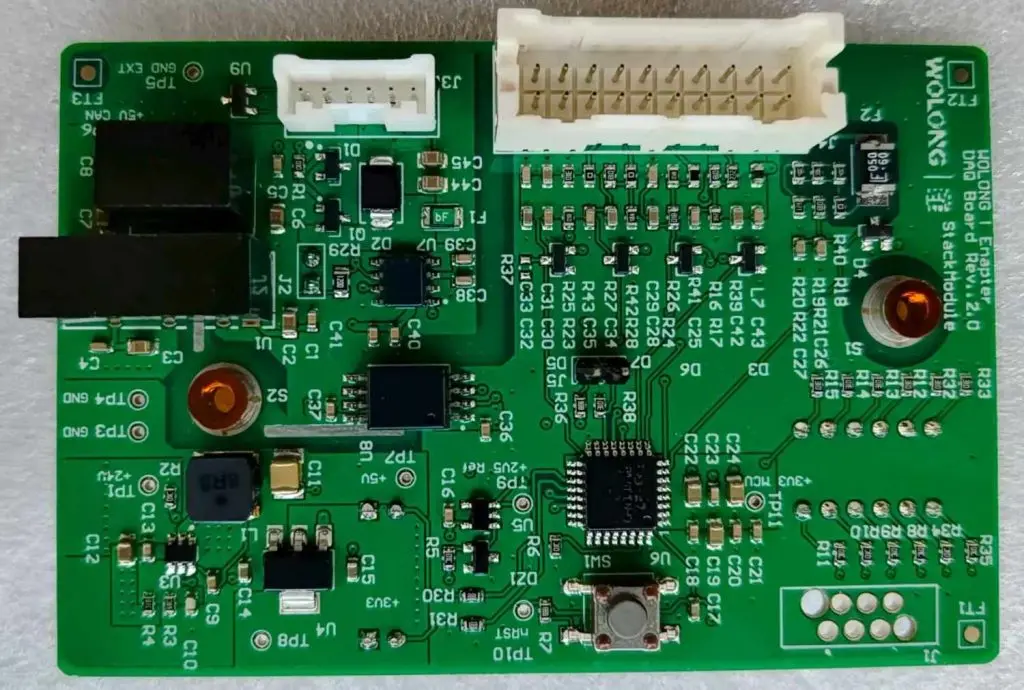

Mining Core Implementation

Each mining core represents a self-contained Ethash computation engine optimized for FPGA implementation. The core design typically includes:

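Keccak Hash Units: Optimized implementations of the Keccak-256 and Keccak-512 functions used by Ethash, which follow the original Keccak padding rather than the finalized SHA-3 standard. The Keccak round function consists of XOR, AND, NOT, and fixed rotations, so it maps to LUTs and routing rather than DSP slices; DSP resources are better suited to the 32-bit multiplications in Ethash's FNV mixing step.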

DAG Access Logic: Specialized circuitry for managing the complex memory access patterns required by the Ethash algorithm.

Nonce Management: Efficient nonce generation and tracking mechanisms to ensure comprehensive search space coverage.

Result Validation: On-chip verification of mining results to reduce unnecessary data transfers to the host system.

Performance Optimization Strategies

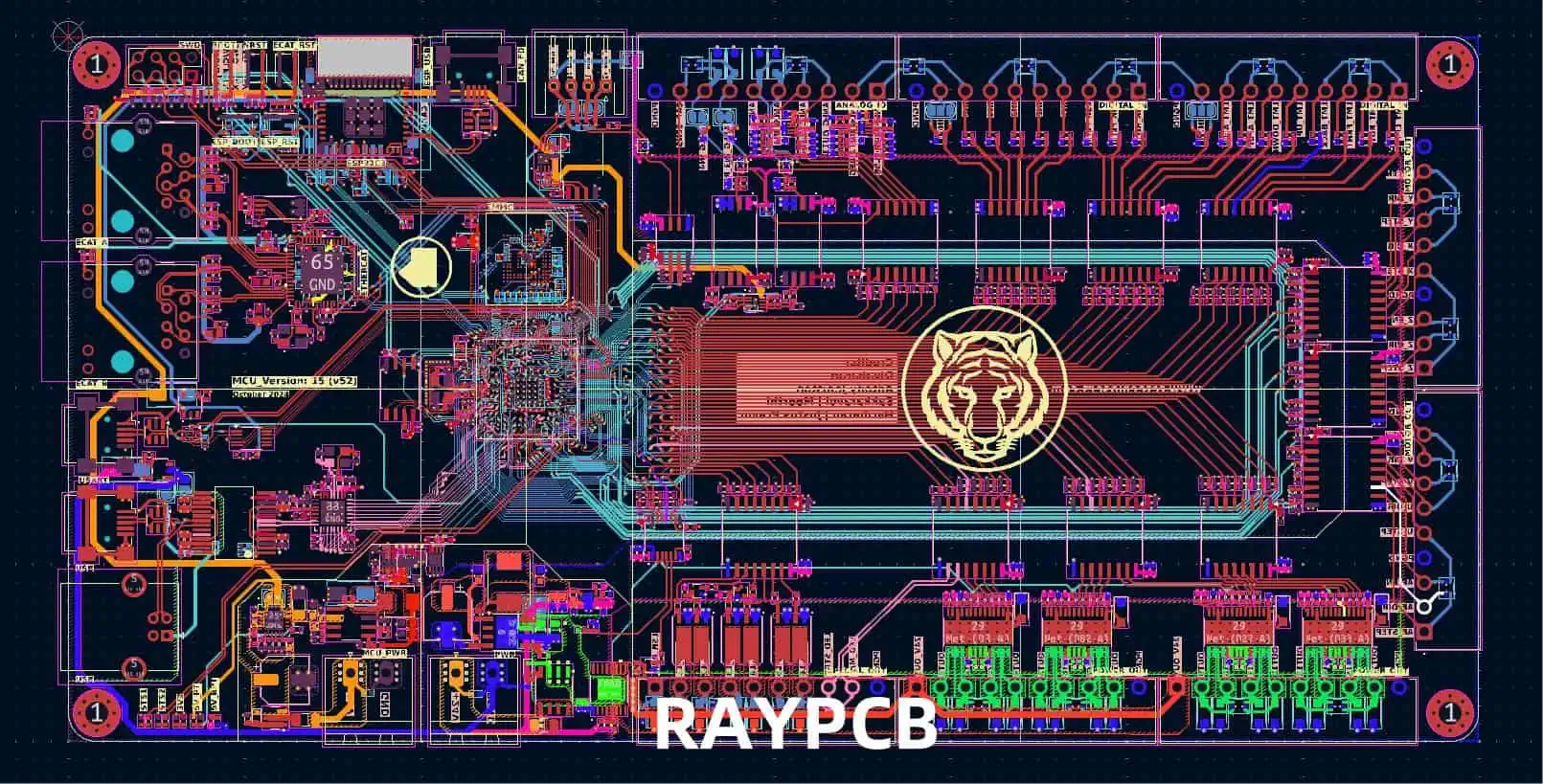

Pipeline Optimization

Effective FPGA mining implementations rely heavily on deep pipeline architectures to maximize throughput. Key optimization strategies include:

Computational Pipeline Balancing: Careful analysis and balancing of pipeline stages to eliminate bottlenecks and ensure maximum clock frequency operation.

Memory Access Pipelining: Implementation of memory access pipelines that keep many DAG lookups in flight at once while ensuring that each returned read is matched to the hash computation that requested it.

Result Processing Overlap: Overlapping result processing operations with ongoing computation to minimize idle time and maximize effective utilization.
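How deep the memory access pipeline must be follows from Little's law: the number of requests in flight equals request rate multiplied by latency. The sketch below applies it to 128-byte DAG reads using integer arithmetic; the function name is hypothetical, and the 200 ns latency in the usage example is an assumed round number, not a measured figure.

```python
def required_inflight_requests(bandwidth_bytes_per_s, latency_ns, request_bytes=128):
    """Outstanding requests needed to sustain the given bandwidth.

    Little's law: in_flight = (bandwidth / request_bytes) * latency,
    rounded up to a whole request.
    """
    numerator = bandwidth_bytes_per_s * latency_ns
    denominator = request_bytes * 10**9
    return -(-numerator // denominator)  # ceiling division on integers


if __name__ == "__main__":
    # Assumed figures: 460 GB/s nominal bandwidth, 200 ns access latency.
    print(required_inflight_requests(460 * 10**9, 200))
```

Under those assumptions about 719 reads must be outstanding at all times. Since each hash has only one read pending at a time (the next address depends on the previous data), this also approximates how many hashes must be interleaved across the whole device.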

Resource Utilization Optimization

Xilinx FPGAs offer diverse computational resources that must be carefully allocated for optimal performance:

DSP Slice Utilization: Strategic use of dedicated DSP slices for performance-critical arithmetic in the hash computation pipeline, such as the 32-bit multiplications in Ethash's FNV mixing step.

BRAM Resource Management: Efficient utilization of on-chip block RAM resources for high-frequency data storage and intermediate result buffering.

Logic Resource Optimization: Careful design to maximize the number of parallel mining cores that can be implemented within the available logic resources.
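A first-order estimate of how many cores fit on a device is the minimum, across resource types, of available resources divided by per-core cost. The sketch below does that calculation with a reserved margin for routing and shared infrastructure. All names and all resource figures in the usage example are hypothetical placeholders; real values come from synthesis utilization reports.

```python
def max_parallel_cores(available, per_core, reserve_percent=20):
    """Return (core_count, limiting_resource) for a device budget.

    available and per_core map resource names to integer counts.
    reserve_percent is held back for routing, memory controllers,
    and the host interface.
    """
    best = None
    for name, need in per_core.items():
        if need <= 0:
            continue
        usable = available[name] * (100 - reserve_percent) // 100
        fit = usable // need
        if best is None or fit < best[0]:
            best = (fit, name)
    if best is None:
        raise ValueError("per_core must list at least one non-zero requirement")
    return best


if __name__ == "__main__":
    # Hypothetical device budget and per-core cost, for illustration only.
    device = {"lut": 1_000_000, "dsp": 6_000, "bram": 2_000}
    core = {"lut": 90_000, "dsp": 64, "bram": 120}
    print(max_parallel_cores(device, core))
```

With these placeholder numbers LUTs are the limiting resource and eight cores fit, which suggests where optimization effort would pay off first. Because Ethash is memory-bound, adding cores beyond what the memory system can feed yields no extra hashrate.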

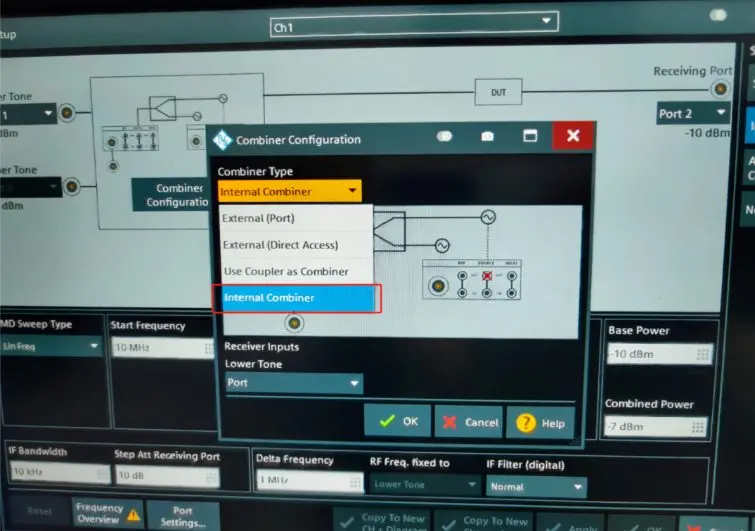

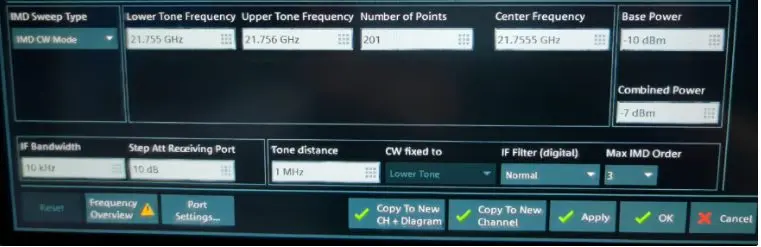

Clock Domain Management

Complex FPGA mining systems often require multiple clock domains to optimize different subsystem operations:

Memory Interface Clocking: Optimization of memory controller clock frequencies to match external memory device specifications and maximize bandwidth.

Computational Core Clocking: Independent optimization of mining core clock frequencies based on critical path analysis and thermal constraints.

Interface Clock Management: Proper management of interface clocks for PCIe and other communication protocols to ensure reliable operation.

Challenges and Implementation Considerations

Thermal Management

FPGA-based mining systems generate significant heat, particularly when operating at maximum performance levels. Effective thermal management strategies include:

Dynamic Voltage and Frequency Scaling: Implementation of adaptive power management techniques that adjust operating parameters based on thermal feedback.

Workload Balancing: Intelligent distribution of computational workload to prevent thermal hotspots and ensure uniform heat distribution across the device.

Cooling System Integration: Design considerations for integration with advanced cooling solutions, including liquid cooling systems for high-performance implementations.
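The thermal feedback idea can be sketched as a small control step that a host-side management loop might call periodically. This minimal version models frequency scaling only (voltage is not adjusted), and every threshold, step size, and name is a hypothetical placeholder to be replaced by values from the device datasheet and board design.

```python
def next_clock_mhz(current_mhz, die_temp_c, *, target_c=85, hysteresis_c=5,
                   step_mhz=25, min_mhz=200, max_mhz=500):
    """Choose the next core clock from the latest die temperature reading.

    Above target_c the clock steps down; below target_c - hysteresis_c it
    steps up; in between it is held to avoid oscillating.
    """
    if die_temp_c > target_c:
        return max(min_mhz, current_mhz - step_mhz)
    if die_temp_c < target_c - hysteresis_c:
        return min(max_mhz, current_mhz + step_mhz)
    return current_mhz


if __name__ == "__main__":
    clock = 450
    for temp in (70, 78, 88, 91, 84, 79):  # made-up temperature readings
        clock = next_clock_mhz(clock, temp)
        print(temp, clock)
```

The hysteresis band is the main design choice here: without it, a controller sitting near the threshold would toggle the clock on every sample.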

Development Complexity

FPGA-based mining system development presents significant technical challenges:

Algorithm Implementation Complexity: The complexity of implementing optimized Ethash computation engines in hardware description languages requires specialized expertise.

Verification and Validation: Comprehensive testing and validation of complex parallel systems to ensure correctness and reliability under all operating conditions.

Toolchain Optimization: Using the Xilinx development tools effectively across synthesis, placement, and routing to reach timing closure at the target clock frequency while keeping device utilization high.

Economic Considerations

The viability of FPGA-based mining systems depends on several economic factors:

Development Costs: Significant upfront investment in development time and expertise required to create competitive implementations.

Hardware Costs: FPGA devices, particularly high-end models suitable for mining applications, represent substantial capital investments.

Performance Scalability: The ability to scale performance through parallel device deployment while maintaining economic viability.
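These factors can be combined into a simple payback estimate. The sketch below is a back-of-the-envelope model with hypothetical names and inputs: it ignores difficulty changes, price volatility, pool fees, and hardware resale value, all of which can dominate in practice.

```python
def payback_days(hardware_cost, development_cost, daily_revenue,
                 power_watts, electricity_price_per_kwh):
    """Days to recover upfront costs, or None if the rig never breaks even."""
    daily_energy_kwh = power_watts / 1000 * 24
    daily_power_cost = daily_energy_kwh * electricity_price_per_kwh
    daily_profit = daily_revenue - daily_power_cost
    if daily_profit <= 0:
        return None
    return (hardware_cost + development_cost) / daily_profit


if __name__ == "__main__":
    # All inputs are made-up placeholders in a single currency unit.
    days = payback_days(hardware_cost=3000, development_cost=0,
                        daily_revenue=10, power_watts=200,
                        electricity_price_per_kwh=0.10)
    print(f"{days:.0f} days")
```

Spreading a fixed development cost over more boards shortens the per-board payback period, which is the arithmetic behind the scalability point above.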

Future Implications and Technological Legacy

While Ethereum’s transition to Proof of Stake ended the era of traditional mining on this network, the technological innovations developed for FPGA-based mining systems continue to have broader implications:

Alternative Cryptocurrency Mining

Many other cryptocurrencies continue to use proof-of-work consensus mechanisms, creating ongoing opportunities for FPGA-based mining acceleration. Because an FPGA is reconfigured by loading a new bitstream, the same hardware can be retargeted to a different algorithm without replacement, although each new algorithm still requires its own design and verification effort.

Computational Acceleration Applications

The optimization techniques and architectural innovations developed for mining applications have found broader applications in high-performance computing, financial modeling, and machine learning acceleration.

Educational and Research Value

FPGA-based mining systems serve as excellent educational platforms for understanding hardware acceleration, parallel computing architectures, and the intersection of computer architecture with economic incentive systems.

Conclusion

Xilinx FPGA-based Ethereum mining acceleration systems represented a sophisticated intersection of reconfigurable computing technology and cryptocurrency economics. These systems demonstrated the potential for FPGA technology to deliver high-performance, power-efficient solutions for computationally intensive applications while highlighting the complex design challenges inherent in developing such systems.

The technical innovations developed during this period continue to influence modern approaches to hardware acceleration and demonstrate the ongoing relevance of FPGA technology in addressing emerging computational challenges. As the cryptocurrency landscape continues to evolve, the fundamental principles and optimization strategies developed for these systems remain valuable for understanding the broader potential of reconfigurable computing in high-performance applications.

The legacy of FPGA-based mining systems extends beyond their original application, contributing to the broader understanding of hardware acceleration, parallel processing architectures, and the economic factors that drive technological innovation in emerging computing domains.