18650 batteries are cylindrical lithium-ion cells (18 mm in diameter and 65 mm long) that are commonly used in high-drain devices like laptops, electric vehicles, and high-powered flashlights. The positive terminal is at one end of the cell and the negative terminal is at the other. Protected 18650 cells also carry a small printed circuit board (PCB), usually fitted at one end of the cell under the outer wrap, that holds the protection circuit.

The PCB serves some important functions:

- Prevent over-charging and over-discharging of the battery

- Protect against short circuits

- Control current flow in and out of the battery

- Provide a connection point for the terminals

Understanding what the PCB is and how it works is useful for anyone using 18650 batteries. This article will provide a detailed overview of the PCB on 18650 batteries.

What is a PCB?

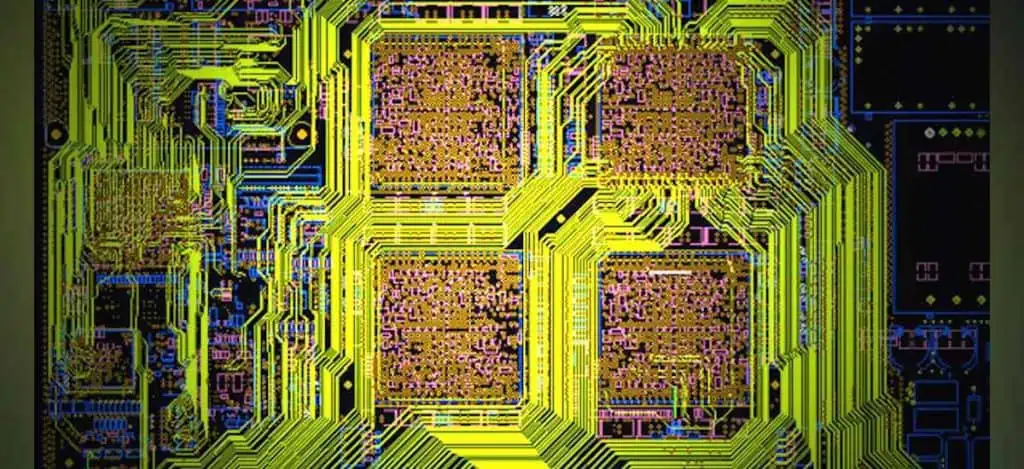

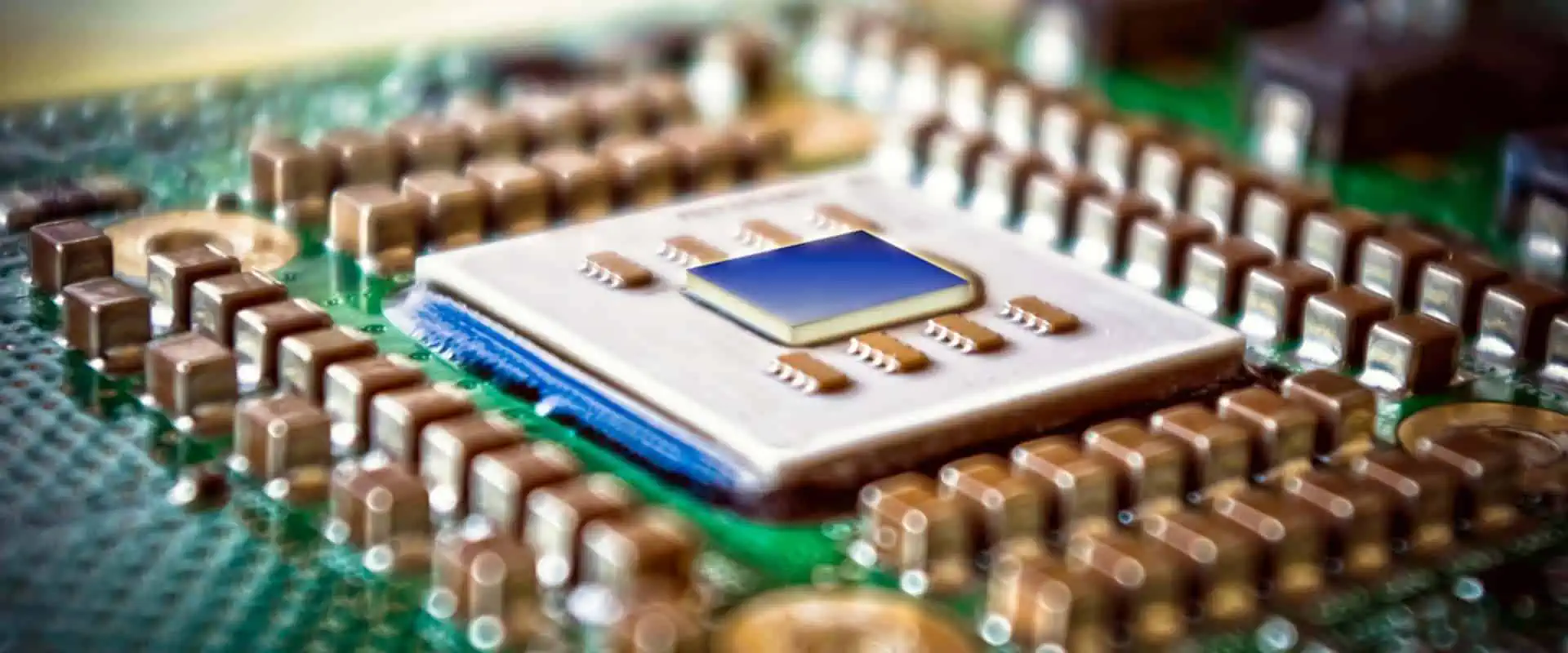

PCB stands for printed circuit board. A PCB is a thin board made of fiberglass (commonly FR-4) or another composite material, with copper traces etched onto it. Electronic components such as resistors, capacitors, and integrated circuits are mounted and soldered onto the PCB to create an electronic circuit.

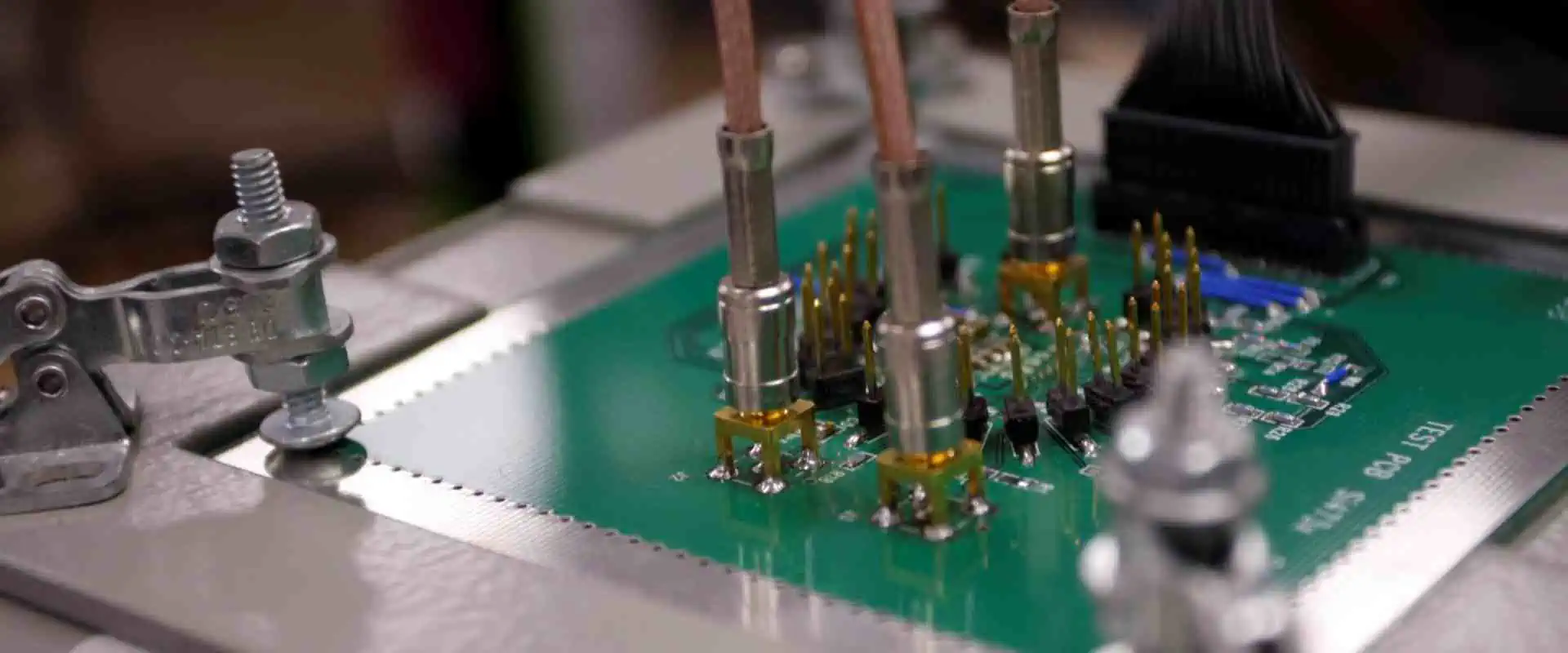

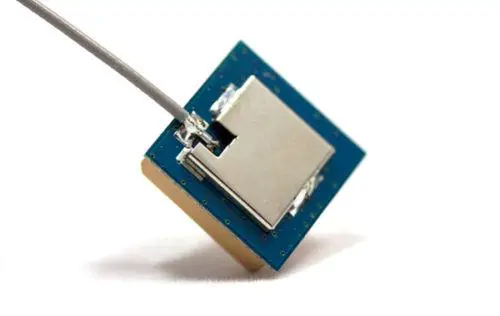

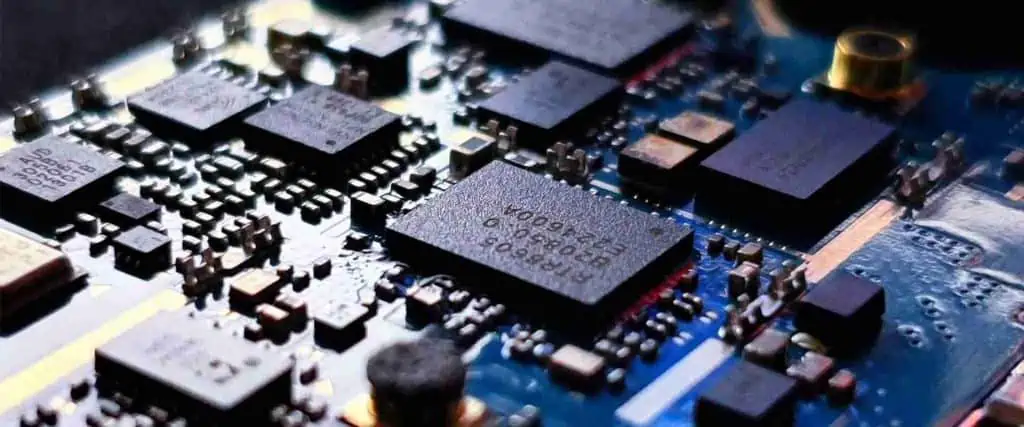

A typical PCB with electronic components mounted on it

PCBs provide a sturdy base for mounting delicate electronic components, and their copper traces route electrical signals between those components. PCBs are used in almost all electronic devices, enabling compact and reliable construction.

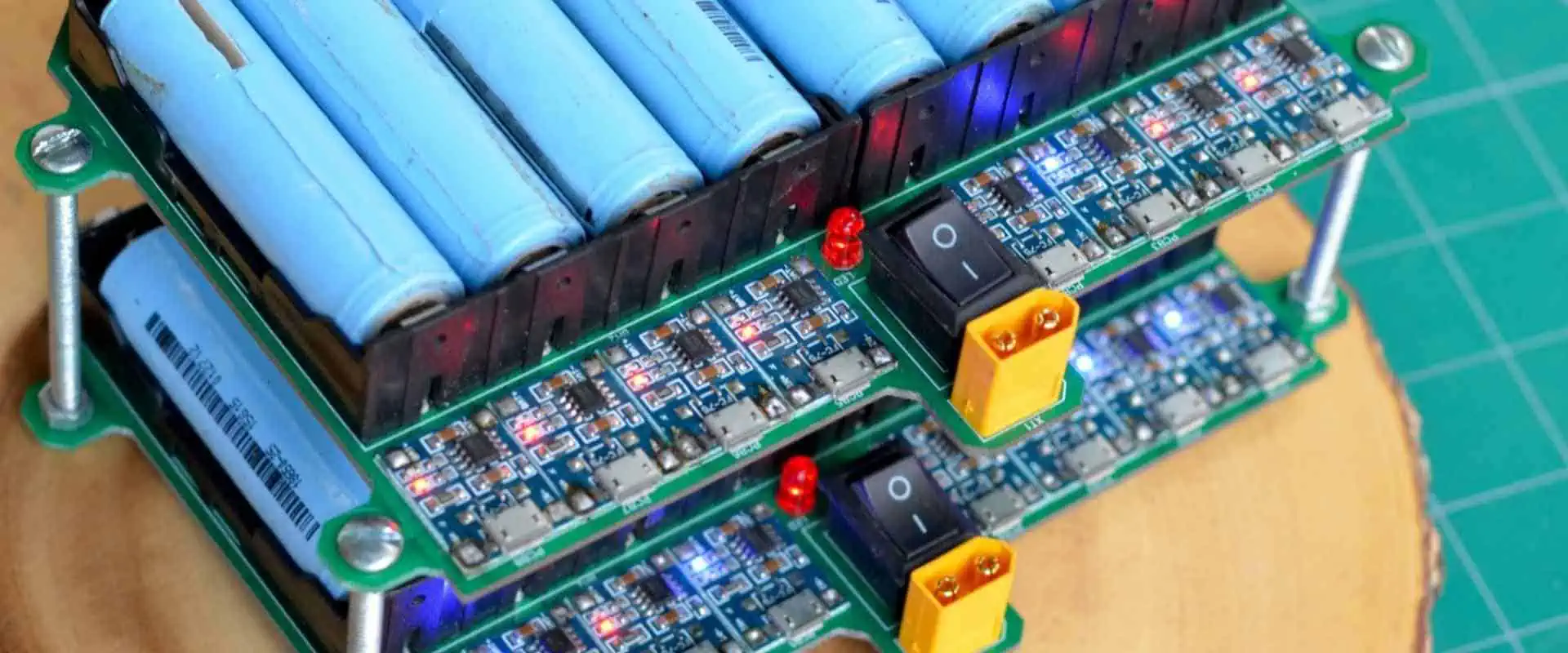

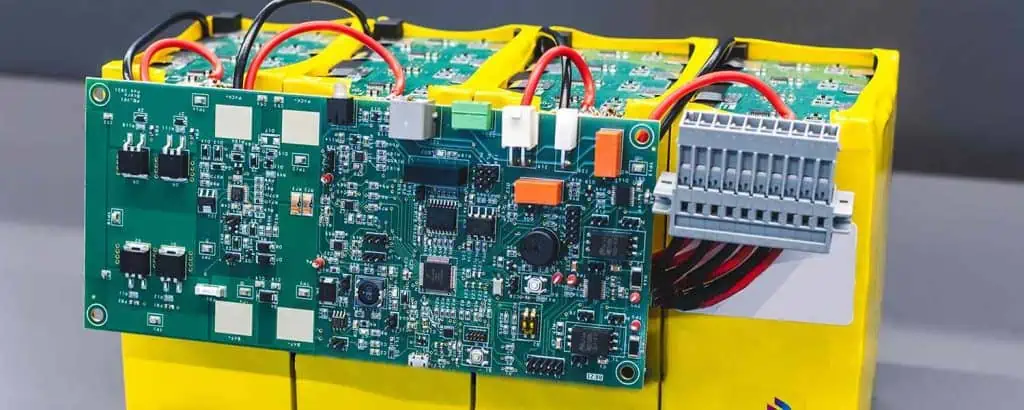

PCB on 18650 Battery

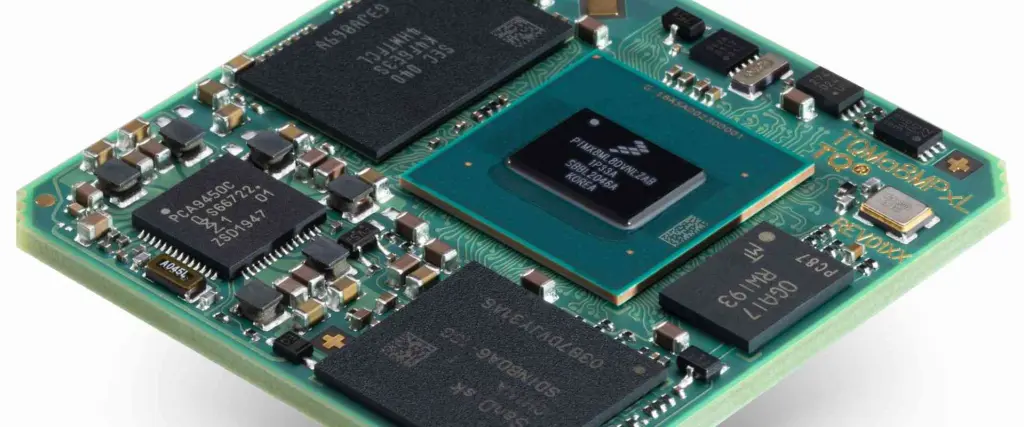

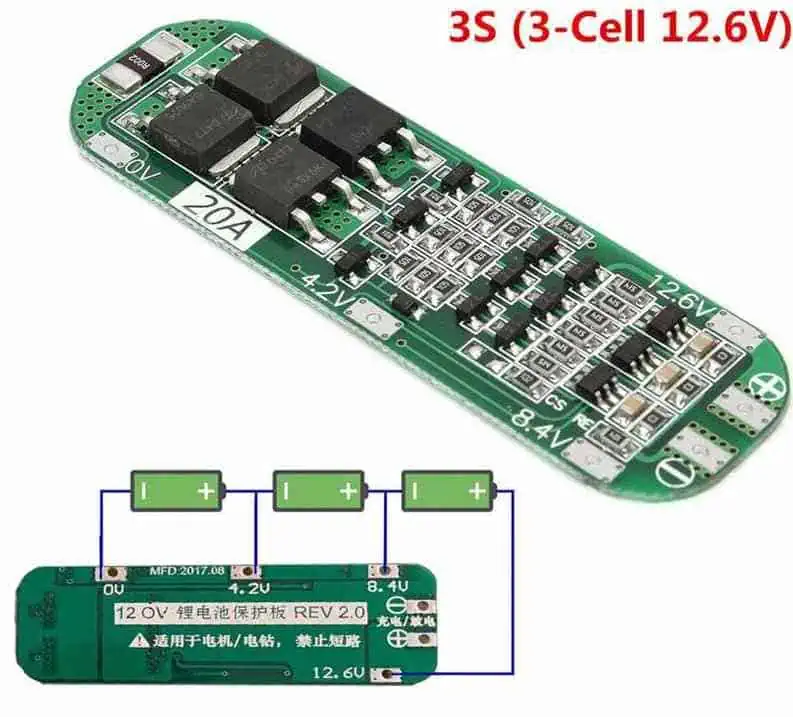

18650 battery with protection circuit module attached

The PCB on an 18650 battery, also called a protection circuit module (PCM), serves the same purpose as a PCB in other electronic devices – it routes electrical connections and mounts electronic components. However, the PCM in an 18650 battery has some additional important functions:

Overcharge Protection

When charging, lithium-ion cells must not be charged beyond their safe voltage limit, which is 4.2V for most 18650 cells. The PCM contains an overvoltage protection circuit that monitors the cell voltage and disconnects the cell from the charger if the voltage exceeds its threshold. This threshold is usually set slightly above the charger's 4.2V end point (often around 4.25-4.35V), so the PCM acts as a backup if the charger fails and prevents the battery from being damaged by overcharging.
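Protection ICs typically release the overcharge state at a lower voltage than the one that triggers it, so the charge path does not rapidly switch on and off around a single threshold. The Python sketch below shows that hysteresis. The 4.30V detect and 4.10V release values are assumptions chosen for illustration, not figures from a particular datasheet.

```python
# Minimal sketch of overcharge detection with hysteresis.
# ASSUMED thresholds for illustration only (not from a specific IC datasheet).
OVERCHARGE_DETECT_V = 4.30
OVERCHARGE_RELEASE_V = 4.10


def update_charge_enable(cell_voltage: float, charge_enabled: bool) -> bool:
    """Return whether the charge MOSFET should be on after this voltage sample."""
    if charge_enabled and cell_voltage >= OVERCHARGE_DETECT_V:
        return False  # voltage too high: open the charge path
    if not charge_enabled and cell_voltage <= OVERCHARGE_RELEASE_V:
        return True  # voltage has fallen back far enough: allow charging again
    return charge_enabled  # between the two thresholds: keep the previous state


if __name__ == "__main__":
    enabled = True
    for v in [4.15, 4.25, 4.31, 4.20, 4.12, 4.09]:
        enabled = update_charge_enable(v, enabled)
        print(f"{v:.2f} V -> charging {'allowed' if enabled else 'blocked'}")
```

With these assumed values, charging stays blocked at 4.20V and 4.12V after a trip and only resumes once the cell drops to 4.09V.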

Overdischarge Protection

When discharging, lithium-ion batteries must not be drained below a certain voltage threshold, around 2.5-3V for most 18650 cells. The PCM contains an undervoltage protection circuit that monitors the battery voltage and disconnects the load if the voltage drops too low. This prevents the battery from being damaged by over-discharging.
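A heavy load can pull the cell voltage down for a moment even when the cell still has charge left, so protection ICs generally require the low voltage to persist for a short delay before cutting off the load. The sketch below illustrates that idea; the 2.5V threshold and the five-sample delay are assumptions for illustration.

```python
# Minimal sketch of overdischarge detection with a detection delay.
# ASSUMPTIONS (illustrative only): 2.5 V threshold, and a fault is declared
# only after 5 consecutive samples below the threshold.
OVERDISCHARGE_V = 2.5
REQUIRED_LOW_SAMPLES = 5


def discharge_should_stop(samples: list[float]) -> bool:
    """Return True once the voltage has stayed below the threshold long enough."""
    consecutive_low = 0
    for v in samples:
        if v < OVERDISCHARGE_V:
            consecutive_low += 1
            if consecutive_low >= REQUIRED_LOW_SAMPLES:
                return True
        else:
            consecutive_low = 0  # a recovered sample restarts the delay
    return False


print(discharge_should_stop([3.0, 2.4, 2.4, 3.1, 2.45, 3.0]))  # brief sags: False
print(discharge_should_stop([2.6, 2.45, 2.44, 2.43, 2.42, 2.41]))  # sustained: True
```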

Short Circuit Protection

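Short circuits can draw huge currents and overheat batteries. The protection IC senses the current, typically from the voltage drop across the MOSFET switches, and turns off the discharge MOSFET if it detects an overcurrent or short circuit. Some cells also include passive devices such as fuses or PTC thermistors as a backup. Together, these protect the battery from excessive current.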
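Because the IC watches a voltage rather than measuring current directly, the current at which it trips follows from Ohm's law: I = V_threshold / R_on. The sketch below works through that calculation. The on-resistance (25 mOhm per MOSFET, two in series) and the 0.15V overcurrent and 1.35V short-circuit thresholds are assumed example values, not taken from a specific part.

```python
# Sketch: trip current implied by a voltage threshold across the MOSFET path.
# ASSUMED example values, for illustration only:
R_ON_PER_FET_OHM = 0.025  # on-resistance of each MOSFET
FETS_IN_PATH = 2  # charge and discharge MOSFETs in series
OVERCURRENT_THRESHOLD_V = 0.15
SHORT_THRESHOLD_V = 1.35


def trip_current(threshold_v: float) -> float:
    """Current (A) at which the drop across the MOSFETs reaches threshold_v."""
    r_total = R_ON_PER_FET_OHM * FETS_IN_PATH
    return threshold_v / r_total


print(f"overcurrent trip: {trip_current(OVERCURRENT_THRESHOLD_V):.1f} A")  # 3.0 A
print(f"short-circuit trip: {trip_current(SHORT_THRESHOLD_V):.1f} A")  # 27.0 A
```

MOSFET on-resistance varies between parts and rises with temperature, so the actual trip current of a real PCM is only approximate.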

Cell Balancing

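In multi-cell battery packs, the cells can drift out of balance over time, so that one cell sits at a higher or lower state of charge than the others and can be overcharged or overdischarged even while the pack as a whole looks normal. Protection boards for multi-cell packs often include cell balancing circuits that bring the cells back to equal voltages for optimal performance and life. A PCM on a single 18650 cell has nothing to balance, so this function belongs to pack-level boards.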
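One common approach is passive balancing, in which cells that sit above the lowest cell are discharged slightly through bleed resistors (active balancing, which moves charge between cells, is an alternative). The sketch below picks which cells to bleed. The 10 mV threshold is an assumption, and real balancing ICs apply their own rules, often balancing only near full charge.

```python
# Sketch of passive (bleed-resistor) balancing decisions in a series pack.
# ASSUMPTION: bleed any cell more than 10 mV above the lowest cell.
BALANCE_THRESHOLD_V = 0.010


def cells_to_bleed(cell_voltages: list[float]) -> list[int]:
    """Indices of cells whose bleed resistor should be switched on."""
    lowest = min(cell_voltages)
    return [i for i, v in enumerate(cell_voltages) if v - lowest > BALANCE_THRESHOLD_V]


print(cells_to_bleed([4.18, 4.15, 4.20, 4.155]))  # [0, 2]
```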

Terminals

The positive and negative battery terminals are connected to the PCM, which routes the current in and out of the battery. So the PCM provides easily accessible battery terminals for devices to connect to.

Protection Circuit Components

The protection circuits on the PCM are created with various electronic components that perform specific functions:

MOSFETs

MOSFET symbol

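MOSFETs (metal-oxide-semiconductor field-effect transistors) are used as electronic switches to turn current flow on and off. They act as control valves that open and close according to the voltage applied to their gate. In the PCM, the protection IC switches the MOSFETs off to disconnect the battery when it detects abnormal voltages or currents. Single-cell PCMs commonly place two MOSFETs in series in the negative lead, one controlling charge current and one controlling discharge current, often in a single dual-MOSFET package.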
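The logic that decides which MOSFET to switch off can be sketched as a small function. This is an illustrative model, assuming the common two-MOSFET arrangement described above; the names `GateState` and `gate_drive` are hypothetical.

```python
# Sketch of how a single-cell protection IC might drive its two MOSFETs.
# ASSUMPTION: separate charge and discharge MOSFETs in series (hypothetical model).
from dataclasses import dataclass


@dataclass
class GateState:
    charge_fet_on: bool
    discharge_fet_on: bool


def gate_drive(overcharged: bool, overdischarged: bool, overcurrent: bool) -> GateState:
    """Switch off only the MOSFET that controls the direction causing the fault."""
    return GateState(
        charge_fet_on=not overcharged,
        discharge_fet_on=not (overdischarged or overcurrent),
    )


print(gate_drive(overcharged=True, overdischarged=False, overcurrent=False))
# GateState(charge_fet_on=False, discharge_fet_on=True)
```

Using two MOSFETs back to back lets the circuit block one direction at a time. When the charge MOSFET is off, discharge current can still flow through its body diode, so an overcharged cell can still power the device. Likewise, an overdischarged cell can still accept charge.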

Resistors

Resistor symbol

Resistors limit and control the amount of current flowing in a circuit. In protection circuits they set the sensitivity to voltage and current levels and, together with capacitors, shape response times. Typical resistor values in PCMs range from roughly 1 ohm to 1 megohm.
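One way resistors set sensitivity is with a voltage divider, which scales a voltage down to the level a comparator or reference expects: V_out = V_in × R_bottom / (R_top + R_bottom). Many single-cell protection ICs sense the cell voltage directly, so the two-cell example and the resistor values below are hypothetical and shown only to illustrate the formula.

```python
def divider_output(v_in: float, r_top: float, r_bottom: float) -> float:
    """Midpoint voltage of an unloaded two-resistor divider."""
    return v_in * r_bottom / (r_top + r_bottom)


# Hypothetical example: scale a fully charged two-cell pack (8.4 V)
# down to a 1.2 V comparison level.
v = divider_output(8.4, r_top=600_000, r_bottom=100_000)
print(f"{v:.2f} V")  # 1.20 V
```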

Capacitors

Capacitor symbol

Capacitors store small amounts of energy and are used to smooth out voltage spikes and ripple. They help filter noise from measurements and control the response rate of protection circuits. Because space on an 18650 PCM is very limited, these are typically small surface-mount ceramic capacitors in the nanofarad to microfarad range.
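A resistor and capacitor together form an RC low-pass filter whose time constant τ = R·C sets how quickly the filtered voltage follows changes, with cutoff frequency f = 1 / (2πRC). The sketch below computes both. The 100 ohm and 0.1 µF values are assumed example values, not taken from a specific PCM design.

```python
import math


def rc_filter(r_ohm: float, c_farad: float) -> tuple[float, float]:
    """Return (time constant in s, -3 dB cutoff frequency in Hz) of an RC low-pass."""
    tau = r_ohm * c_farad
    return tau, 1.0 / (2.0 * math.pi * tau)


# ASSUMED example values: 100 ohm series resistor, 0.1 uF capacitor.
tau, fc = rc_filter(100.0, 0.1e-6)
print(f"tau = {tau * 1e6:.1f} us, cutoff = {fc / 1e3:.1f} kHz")
# tau = 10.0 us, cutoff = 15.9 kHz
```

A larger R or C slows the response and filters more noise, at the cost of reacting later to real faults.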

IC Chips

IC chip symbol

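Integrated circuits (ICs) pack complex protection circuitry into a tiny footprint. Protection ICs monitor voltage and current (and in some designs temperature) and control charging and discharging. ICs designed for multi-cell packs may also balance cell voltages. Common single-cell protection ICs include the DW01 and the Seiko S-8261 series, while more complex fuel-gauge ICs such as the bq20z45 are used in multi-cell packs like laptop batteries.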

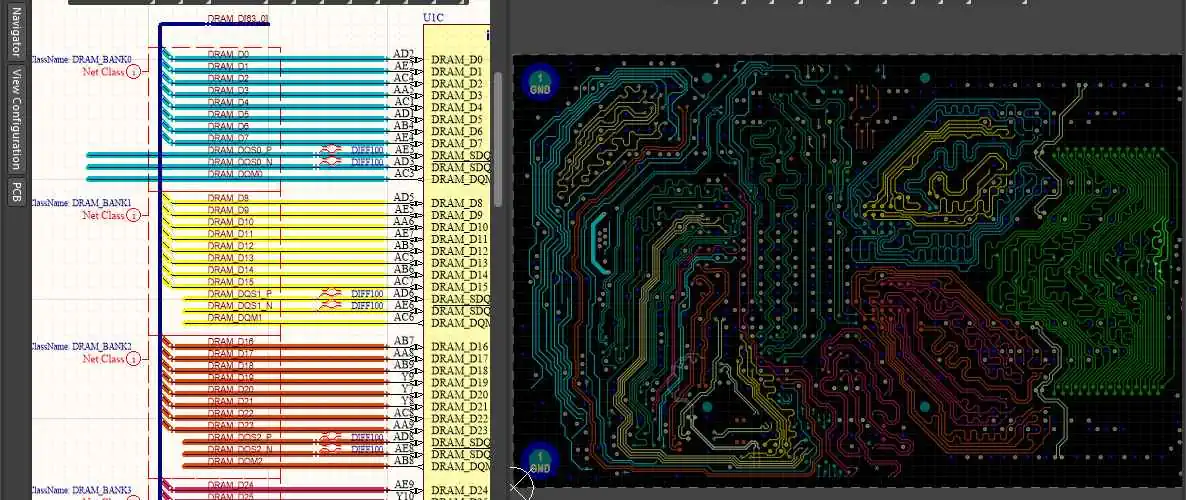

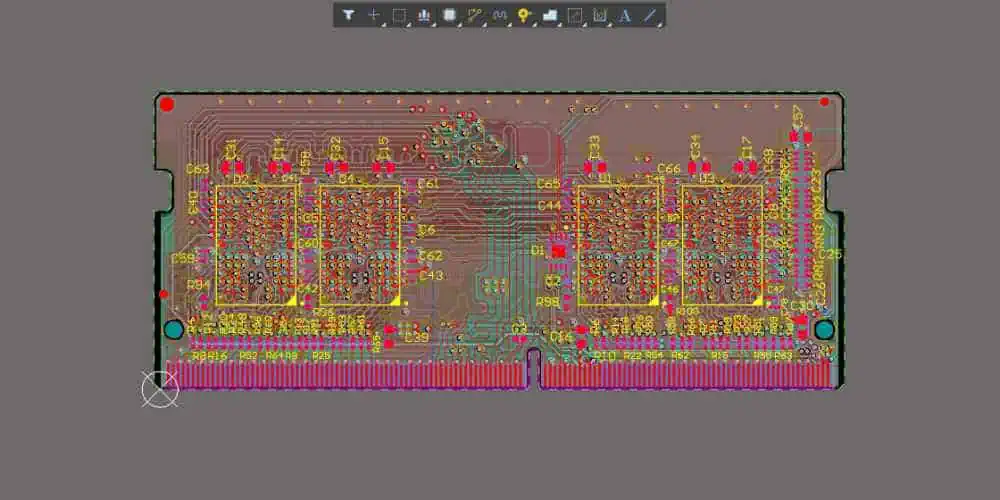

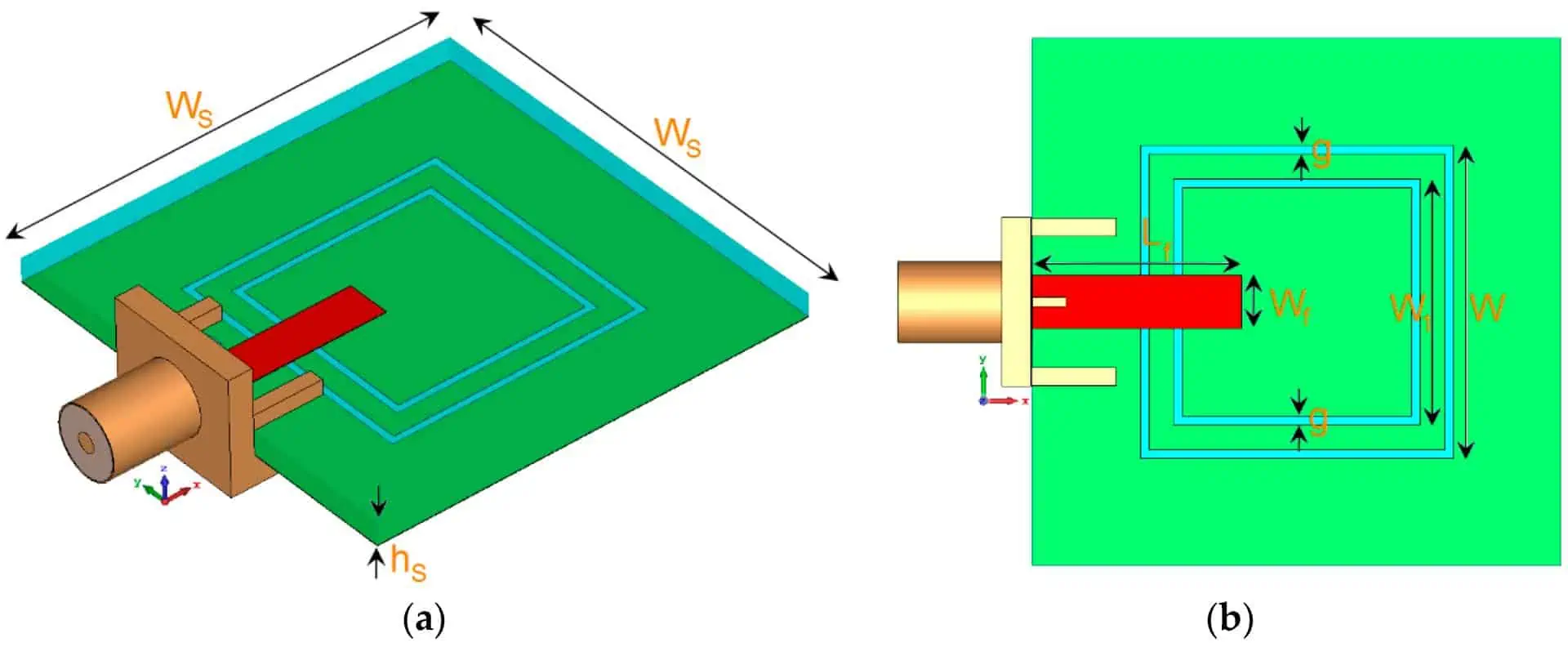

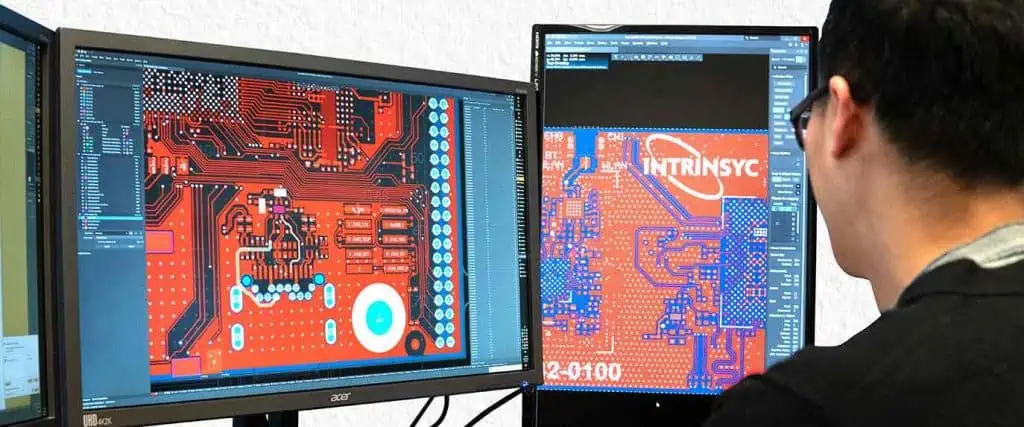

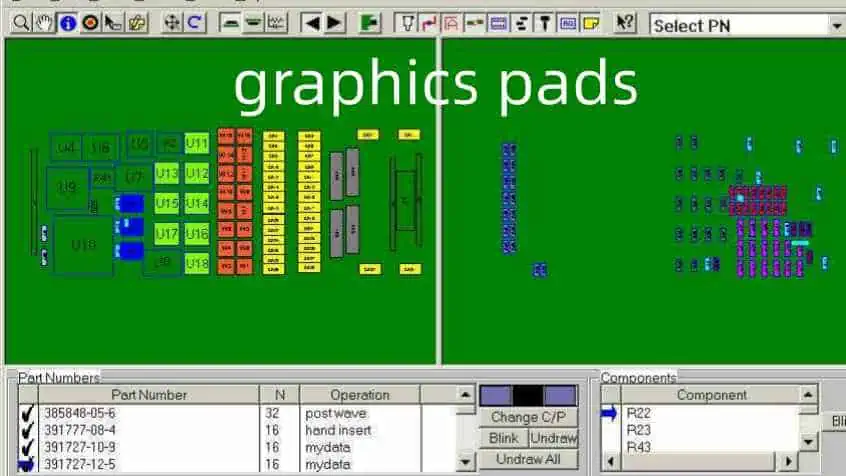

PCB Design and Construction

The PCB for the protection circuit is designed using CAD software, which routes copper traces between components according to the circuit diagram. The design is then etched onto the board, which is usually double-sided (copper on both faces) or multi-layer for more complex circuitry.

Components are precisely placed onto the PCB by pick-and-place machines and then soldered into place. Because a PCM must fit in the confined space of an 18650 battery casing, surface-mount devices are used instead of through-hole components.

PCB fabrication uses automated processes for etching copper layers, drilling holes, applying solder mask and screen-printing labels to create professional-looking, functional boards. The populated PCBs then undergo functional testing before being paired with the cylindrical cell cores.

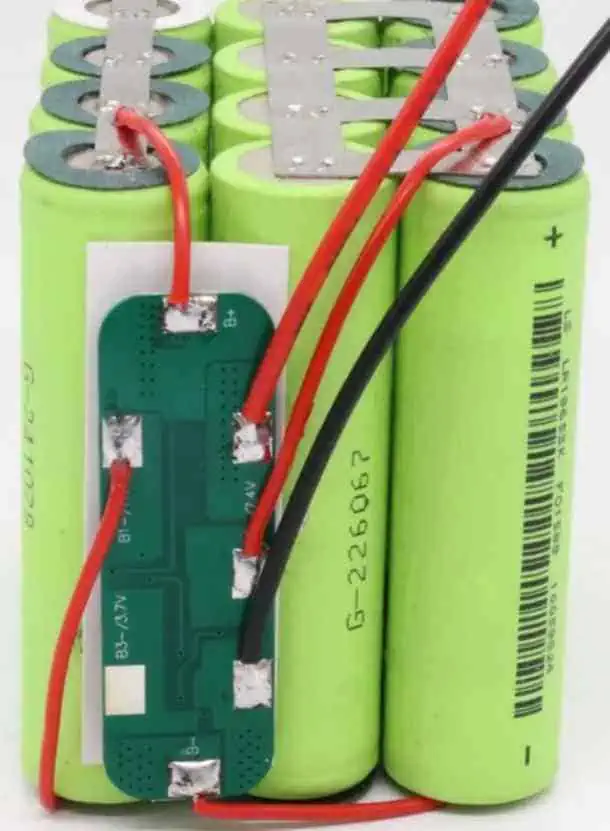

Here is a comparison of a bare 18650 cell core versus one with a PCM installed:

| 18650 Cell | 18650 Cell with PCM |
|---|---|

The PCM is fitted onto the cell core and makes electrical contact with its terminals. The whole assembly is then shrink-wrapped in insulating material to create the finished protected 18650 cell.

Advantages of PCM Protection

Installing a protection circuit module onto 18650 cells has several benefits:

- Prevents cell damage from over-charging or over-discharging

- Protects against short circuits that can cause fires

- Balances cells in multi-cell packs for optimal performance (when the pack's protection board includes balancing)

- Safety-certified cells reassure end users

- Enables use of inexpensive core cells without native protection

- In smart packs with a fuel-gauge IC, reports battery voltage and state of charge to the host device

This allows lower-cost unprotected cell cores to be turned into safer and smarter batteries for diverse applications. The small added cost of the PCM buys greatly improved safety and battery life.

Unprotected cells should only be used in devices or battery packs that provide their own protection circuitry. For standalone use, the investment in a protection circuit pays off in battery longevity and reduced risk.

Protection Circuit Limitations

While PCMs provide invaluable protection, they do have some limitations:

- The protection circuit draws a small standby current that gradually drains the battery, so the cell keeps slowly discharging even when the device is switched off.

- Voltage and current thresholds have some tolerance, and the IC waits for a short detection delay before disconnecting. Some marginal over-charging or over-discharging may therefore occur.

- The MOSFET switches and any fuse have their own resistance, which causes some power loss. This loss grows with the square of the current.

- Very high short-circuit currents can flow briefly before the protection circuit responds and cuts them off.

- Excessive ambient temperatures can stop the circuits from operating properly, and thermal overload can lead to failure.
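The standby-drain and resistance limitations above are easy to quantify. The sketch below estimates the share of capacity consumed per year by a constant standby current, and the conduction loss P = I²R in the switch path. The 3 µA standby current, 3000 mAh capacity and 50 mOhm path resistance are assumed example figures, not measurements of a particular PCM.

```python
# ASSUMED example figures, for illustration only (not from any datasheet):
QUIESCENT_CURRENT_UA = 3.0  # standby current of the protection IC
CELL_CAPACITY_MAH = 3000.0  # rated cell capacity
PATH_RESISTANCE_OHM = 0.050  # total on-resistance of the two MOSFETs
HOURS_PER_YEAR = 24 * 365


def standby_loss_percent_per_year(quiescent_ua: float, capacity_mah: float) -> float:
    """Share of rated capacity consumed in one year by a constant standby current."""
    drained_mah = quiescent_ua / 1000.0 * HOURS_PER_YEAR  # uA -> mA, times hours
    return 100.0 * drained_mah / capacity_mah


def conduction_loss_w(current_a: float, resistance_ohm: float) -> float:
    """Power dissipated in the switch path: P = I^2 * R."""
    return current_a ** 2 * resistance_ohm


print(f"standby drain: {standby_loss_percent_per_year(QUIESCENT_CURRENT_UA, CELL_CAPACITY_MAH):.2f} % per year")
for amps in (1.0, 2.0, 4.0):
    print(f"{amps:.0f} A -> {conduction_loss_w(amps, PATH_RESISTANCE_OHM) * 1000:.0f} mW")
```

With these assumptions, the standby current consumes under 1% of capacity per year. The conduction loss, by contrast, quadruples each time the current doubles, which is why it matters most in high-drain use.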

So PCM protection should not be considered foolproof. Batteries should not be discharged below recommended cutoffs or charged beyond maximum levels. Short circuits should still be avoided. And proper heat dissipation is still necessary in battery pack design. The PCM just provides a crucial additional layer of safety.

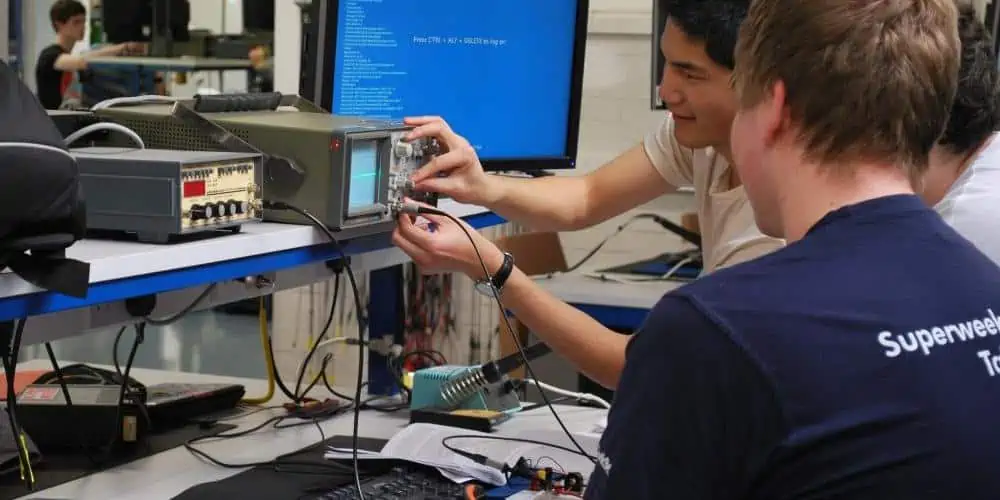

Protection Circuit Testing

Since the PCM is so vital for battery safety, all reputable battery manufacturers perform extensive testing to ensure the protection circuits work as intended. Here are some common tests conducted:

Overcharge Protection Verification

Batteries are charged to progressively higher voltages to ensure overcharge protection activates within the specified range and prevents further charging. This confirms the upper cutoff voltage limit.
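The stepped procedure can be sketched as a loop that raises the voltage until the device under test blocks charging, then checks the trip point against an acceptance window. The simulated device (tripping at 4280 mV), the 4250-4350 mV window and the 5 mV step are all hypothetical. Integer millivolts are used so that floating-point rounding cannot shift the detected step. The same loop, stepping downward, fits the overdischarge check.

```python
from typing import Callable, Optional


def find_trip_mv(start_mv: int, stop_mv: int, step_mv: int,
                 blocks_charge: Callable[[int], bool]) -> Optional[int]:
    """Raise the voltage step by step; return the first level where charging is blocked."""
    for v in range(start_mv, stop_mv + 1, step_mv):
        if blocks_charge(v):
            return v
    return None  # never tripped within the sweep range


def simulated_pcm(v_mv: int) -> bool:
    # Hypothetical device under test that trips at 4280 mV.
    return v_mv >= 4280


SPEC_MIN_MV, SPEC_MAX_MV = 4250, 4350  # assumed acceptance window

trip = find_trip_mv(4200, 4400, 5, simulated_pcm)
passed = trip is not None and SPEC_MIN_MV <= trip <= SPEC_MAX_MV
print(trip, "PASS" if passed else "FAIL")  # 4280 PASS
```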

Overdischarge Protection Verification

Batteries are discharged through fixed loads down to progressively lower voltages to ensure overdischarge protection activates within the specified range. This confirms the lower cutoff voltage limit.

Short Circuit Test

A dead short is applied across the battery terminals, and the resulting peak current and disconnect time are measured. This verifies that the PCM can withstand a short circuit and protect the cell from it.
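Extracting the two measured quantities from a recorded current waveform is simple. The sketch below assumes the short is applied at the first sample and that the current counts as cut off once it falls below 0.1 A after the peak. The sample data and 10 µs sample period are made up for illustration.

```python
from typing import Optional, Sequence


def analyze_short(samples_a: Sequence[float], sample_period_s: float,
                  off_threshold_a: float = 0.1) -> tuple[float, Optional[float]]:
    """Return (peak current, time after the first sample at which current falls
    below off_threshold_a following the peak), or None if it never does."""
    peak = max(samples_a)
    peak_index = samples_a.index(peak)
    for i in range(peak_index, len(samples_a)):
        if samples_a[i] < off_threshold_a:
            return peak, i * sample_period_s
    return peak, None


# Hypothetical recording, one sample every 10 us.
peak, t_off = analyze_short([18.0, 24.0, 22.5, 21.0, 0.05, 0.0], 10e-6)
print(f"peak {peak:.1f} A, disconnect after {t_off * 1e6:.0f} us")
# peak 24.0 A, disconnect after 40 us
```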

Charge/Discharge Cycling

Batteries undergo repeated charge/discharge cycles while monitoring voltages, currents and temperatures. This evaluates protection performance over time as the battery ages.

Drop/Shock/Vibration

Physical drop tests, shock tests and vibration tests are performed to ensure PCM integrity is maintained after impacts or shaking.

Extensive testing over diverse operating conditions ensures the PCM provides robust protection throughout the battery’s service life. Independent lab certification is the gold standard for safety-critical lithium-ion batteries.

Common Protection ICs

There are a variety of integrated circuits designed specifically for lithium battery protection. Here are some of the most popular ICs used on 18650 PCMs:

Seiko S-8261 Series

The S-8261A, S-8261AB, and S-8261BA are widely used single-cell lithium battery protection ICs from Seiko. They provide overcharge, overdischarge and overcurrent protection. As single-cell devices, they do not perform cell balancing.

Texas Instruments BQ20z45 Series

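The bq20z45 and bq2046 ICs combine fuel gauging with battery management functions and are intended for multi-cell packs, such as laptop batteries, rather than for the PCM on a single 18650 cell. They communicate with a host controller over SMBus, a two-wire interface derived from I2C. This enables fuel gauging and optimization of charging and discharging parameters.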

Analog Devices ADP5052/5053

The ADP5052 and ADP5053 are multi-channel power management ICs (step-down regulators plus an LDO) rather than dedicated lithium cell protection ICs. Their undervoltage lockout, current limiting and thermal shutdown protect the regulator itself, so they do not replace a protection IC such as the DW01 or S-8261.

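Zetex (Diodes Incorporated) ZXCT1009F

The ZXCT1009 is a high-side current monitor originally from Zetex, now part of Diodes Incorporated. It converts the voltage across an external sense resistor into a proportional output current. It does not provide overvoltage or undervoltage protection on its own; in a battery circuit, it supplies a current measurement that other circuitry acts on.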

DW01-P/CF/DF

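The DW01 family (including the DW01A, DW01-P and many compatible clones, originally from Fortune Semiconductor) consists of low-cost single-cell protection ICs in a SOT-23-6 package. They provide overcharge, overdischarge, overcurrent and short-circuit protection by driving a pair of external MOSFETs. They have no UART or other data interface; they simply switch the MOSFETs on and off.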

Conclusion

In summary, the PCB or protection circuit module on an 18650 lithium-ion battery plays a crucial role in:

- Preventing damage from over-charge/over-discharge

- Protecting against short circuits

- Monitoring cell state

- Providing easy battery connections

It allows unprotected cell cores to be turned into smart, safe batteries. The protection electronics guard against the risks of lithium-ion batteries whilst allowing them to provide high energy density storage.

Carefully designed PCMs with quality components from reputable brands help ensure the protection reliability needed in lithium battery packs. Extensive testing and safety certification provide confidence in PCM performance over the battery's service life.

So the next time you use a protected 18650 battery, remember that the little PCB inside is essential to its safe and effective operation. The PCM makes high-power portable applications viable and gives users peace of mind.

FAQs

What are the key functions of the PCM?

The main functions of the PCM are:

- Overcharge protection to prevent over-charging above 4.2V

- Overdischarge protection to prevent over-discharging below 2.5-3V

- Short circuit protection via fuses and current monitoring

- Cell balancing in multi-cell packs

- Providing connection points for battery terminals

Does every 18650 battery have a PCB?

No. Most 18650 cells are made as “raw” unprotected cells, intended for battery packs or devices that provide their own protection circuitry. Protected cells with onboard PCMs are sold mainly for standalone use, such as in flashlights. Unprotected cells should not be used without external protection.

Where is the PCM located on an 18650?

On most protected 18650 cells, the PCM is a small round board at the negative (bottom) end of the cell, under the outer wrap. It sits in the current path between the cell and the external terminal. The protection IC and other components are on the flat surface of the PCM board.

Can an 18650 work without a PCM?

Bare 18650 cell cores will function without a PCM but have risks. There is potential for over-charge, over-discharge, short circuits and cell imbalance in packs. For reliable and safe operation, a PCM is highly recommended.

Is the PCM replaceable if damaged?

Generally, no. The PCM on most 18650 cells is factory-fitted and not designed to be replaced. If the PCM is damaged, the entire cell or pack should be replaced for safety, since trying to replace the PCM risks causing a short circuit.

Does a PCM reduce the battery capacity?

The PCM does use a tiny amount of power itself, so it causes a minor reduction in usable capacity. But this is small (under 5%) compared to the huge benefits in battery protection and enhanced safety it provides.