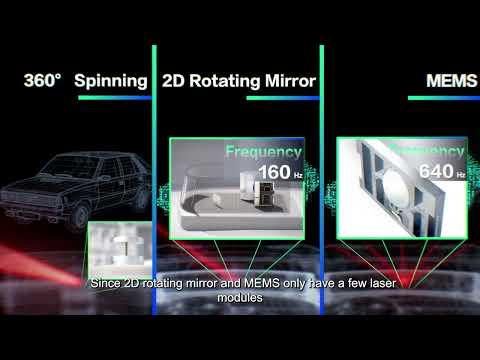

The automotive industry stands at a technological crossroads as manufacturers grapple with choosing between two distinct LiDAR approaches for autonomous driving systems. Recent vehicle launches highlight this divide: the Yangwang U8 features RoboSense’s M1P long-range LiDAR for lateral sensing, while the AITO M series employs TOF (Time-of-Flight) solid-state LiDAR for short-range lateral detection. This fundamental choice will shape the future of autonomous vehicle perception systems.

Understanding the Technology Divide

Although both technologies are classified as “LiDAR” or “optical ranging,” they take fundamentally different approaches to environmental perception. Long-range MEMS/solid-state LiDAR systems like RoboSense’s M1P belong to the high-performance category, delivering detailed point clouds at ranges of up to roughly 200 meters. These systems excel at creating comprehensive 3D environmental models essential for high-speed autonomous driving scenarios.

Conversely, the compact short-range sensors marketed as TOF rely on a simple direct measurement: the time difference between an emitted light pulse and its reflection gives the distance to the target. Pulsed long-range LiDAR also ranges by time of flight, so the TOF label here refers to a class of small, low-cost modules rather than to a unique physical principle. While TOF sensors can be highly miniaturized and cost-effective, they typically serve short-range applications such as blind-spot monitoring, parking assistance, and low-speed collision avoidance. The key distinction lies not just in range, but in the depth and quality of environmental understanding each technology provides.
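The time-of-flight relationship described above is d = c·t/2, where t is the round-trip time of the pulse. The following is a minimal sketch of that arithmetic; the function names are illustrative and do not correspond to any sensor vendor's API.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0  # speed of light in vacuum


def tof_distance_m(round_trip_time_s: float) -> float:
    """Distance to the target given the pulse's round-trip time."""
    if round_trip_time_s < 0:
        raise ValueError("round-trip time must be non-negative")
    # The pulse travels to the target and back, hence the division by 2.
    return SPEED_OF_LIGHT_M_PER_S * round_trip_time_s / 2.0


def round_trip_time_s(distance_m: float) -> float:
    """Round-trip time a pulse needs to cover a given target distance."""
    if distance_m < 0:
        raise ValueError("distance must be non-negative")
    return 2.0 * distance_m / SPEED_OF_LIGHT_M_PER_S


if __name__ == "__main__":
    for d in (5.0, 50.0, 200.0):
        t_ns = round_trip_time_s(d) * 1e9
        print(f"{d:6.1f} m -> round trip of {t_ns:8.1f} ns")
```

A target at 200 meters returns its echo after roughly 1.3 microseconds, and one at 50 meters after roughly a third of a microsecond, which is why timing precision in the nanosecond range matters for both sensor classes.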

Performance Characteristics and Applications

Detection Range and Scenario Optimization

Long-range solid-state LiDAR systems demonstrate superior capabilities in demanding driving conditions. With detection ranges of 150-200 meters and point generation rates exceeding one million points per second, these systems provide the early warning necessary for high-speed autonomous navigation. At highway speeds, detecting small objects (distant vehicles, pedestrians, or road debris) several seconds before a potential impact gives the system the time it needs to respond.
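To make the "several seconds" figure concrete, the sketch below converts detection range and vehicle speed into warning time for a stationary obstacle, and compares it with a simple stopping-distance estimate. The 0.5 s system reaction time and 6 m/s² deceleration are assumptions chosen for illustration, not values from any cited vehicle or standard.

```python
def warning_time_s(detection_range_m: float, speed_kmh: float) -> float:
    """Seconds until a stationary obstacle is reached after first detection."""
    if speed_kmh <= 0:
        raise ValueError("speed must be positive")
    speed_m_per_s = speed_kmh / 3.6
    return detection_range_m / speed_m_per_s


def stopping_distance_m(
    speed_kmh: float,
    reaction_time_s: float = 0.5,  # assumed system reaction time
    decel_m_s2: float = 6.0,       # assumed constant braking deceleration
) -> float:
    """Distance covered during the reaction time plus constant-deceleration braking."""
    v = speed_kmh / 3.6
    return v * reaction_time_s + v ** 2 / (2.0 * decel_m_s2)


if __name__ == "__main__":
    speed = 120.0  # km/h
    print(f"stopping distance at {speed:.0f} km/h: {stopping_distance_m(speed):.0f} m")
    for range_m in (50.0, 150.0, 200.0):
        print(f"range {range_m:5.0f} m -> {warning_time_s(range_m, speed):.1f} s of warning")
```

At 120 km/h, a 200-meter detection range yields 6 seconds of warning and a 150-meter range yields 4.5 seconds, while 50 meters yields only 1.5 seconds. Under the assumed braking parameters the stopping distance is roughly 109 meters, which is beyond what a short-range sensor alone can cover at that speed.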

TOF sensors, while limited to ranges typically under 50 meters, excel in scenarios where rapid response and cost efficiency are paramount. Lane-change assistance, parking maneuvers, and blind-spot monitoring represent ideal applications for TOF technology, where ultra-low latency and compact form factors outweigh the need for long-range detection.

Point Cloud Quality and 3D Perception

The quality of environmental data represents perhaps the most significant differentiator between these technologies. Traditional high-performance LiDAR systems generate dense, three-dimensional point clouds that enable sophisticated object detection, classification, and geometric modeling. This capability proves invaluable in complex scenarios such as multi-object tracking at busy intersections, precise boundary recognition on narrow roads, or reliable obstacle detection in low-light conditions.

TOF systems typically produce 2D depth maps or sparse distance measurements rather than comprehensive 3D point clouds. While sufficient for basic proximity detection and simple geometric calculations, this limitation constrains their effectiveness in complex, far-field reasoning scenarios that autonomous vehicles regularly encounter.

Environmental Resilience and Reliability

Both technologies face challenges in adverse weather conditions, though their vulnerabilities manifest differently. As optical sensors, LiDAR systems can suffer from signal attenuation and false returns caused by rain, snow, or fog. However, advanced implementations incorporate dual-echo processing, extended wavelength ranges, and sophisticated signal filtering to mitigate these effects.
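One common idea behind dual-echo processing is that a pulse may produce a weak early return from a raindrop or fog and a later return from the solid object behind it. The sketch below shows a simple last-return heuristic under that assumption. The `Echo` type, the `select_echo` function, and the intensity threshold are hypothetical; the article does not describe how any specific sensor implements this.

```python
from typing import NamedTuple, Optional, Sequence


class Echo(NamedTuple):
    range_m: float    # measured distance of this return
    intensity: float  # normalized return strength in [0, 1]


def select_echo(
    echoes: Sequence[Echo], min_intensity: float = 0.05
) -> Optional[Echo]:
    """Pick the farthest echo whose intensity clears the noise threshold.

    Returns None when no echo is strong enough to trust.
    """
    valid = [e for e in echoes if e.intensity >= min_intensity]
    if not valid:
        return None
    # Last-return heuristic: precipitation tends to sit in front of the target.
    return max(valid, key=lambda e: e.range_m)


if __name__ == "__main__":
    beam = [Echo(range_m=3.2, intensity=0.08), Echo(range_m=42.0, intensity=0.60)]
    print(select_echo(beam))
```

A production filter would also weigh echo shape, neighboring beams, and temporal consistency; the farthest-return rule alone can discard a genuine near obstacle that partially occludes the beam.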

TOF sensors, while also susceptible to weather-related interference, benefit from their short-range focus, allowing for more targeted filtering algorithms. However, neither technology alone provides complete weather immunity, emphasizing the critical importance of multi-sensor fusion strategies that combine optical sensors with radar and camera systems.
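The benefit of fusing sensors can be illustrated with inverse-variance weighting, a standard way to combine independent noisy measurements of the same quantity. This is a minimal sketch rather than a description of any production fusion stack; the function name and the example standard deviations are hypothetical.

```python
from math import sqrt
from typing import Sequence, Tuple


def fuse_ranges(estimates: Sequence[Tuple[float, float]]) -> Tuple[float, float]:
    """Fuse independent (range_m, std_m) estimates by inverse-variance weighting.

    Returns the fused range and its standard deviation.
    """
    if not estimates:
        raise ValueError("need at least one estimate")
    if any(std <= 0 for _, std in estimates):
        raise ValueError("standard deviations must be positive")
    weights = [1.0 / std ** 2 for _, std in estimates]
    total = sum(weights)
    fused = sum(w * r for w, (r, _) in zip(weights, estimates)) / total
    return fused, sqrt(1.0 / total)


if __name__ == "__main__":
    lidar = (48.9, 0.05)  # assumed: precise in clear air
    radar = (49.4, 0.50)  # assumed: coarser, but robust in rain and fog
    print(fuse_ranges([lidar, radar]))
```

When weather degrades the optical measurement, its standard deviation grows and the fused estimate shifts toward the radar reading automatically, which is the behavior a fusion strategy relies on.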

Economic and Integration Considerations

Manufacturing and Cost Dynamics

TOF modules present compelling advantages in terms of manufacturing cost, physical size, and integration complexity. These sensors can be seamlessly embedded into vehicle designs without significant aesthetic or structural modifications, making them attractive for cost-conscious manufacturers and mainstream vehicle segments.

High-performance long-range LiDAR systems demand more sophisticated engineering, encompassing precision optics, advanced electronics, ruggedized packaging, and automotive qualification and functional-safety requirements such as AEC-Q100 component qualification and ISO 26262 (ASIL) compliance. These requirements traditionally resulted in higher costs, though economies of scale and technological maturation are driving prices downward. RoboSense’s M1 series exemplifies this trend, achieving mass production viability that makes high-performance LiDAR accessible to broader market segments.

System Architecture Philosophy

Automotive manufacturers are adopting divergent approaches based on their target market segments and technological philosophies. Premium manufacturers often center their systems around high-performance, long-range LiDAR mounted on vehicle roofs or integrated into front fascias, treating these sensors as primary perception tools supplemented by cameras and radar for redundancy and semantic understanding.

Cost-focused manufacturers prefer architectures built around camera-centric systems enhanced by strategically placed TOF sensors for specific proximity tasks. This approach leverages advanced computer vision algorithms and cloud-based data processing to achieve acceptable performance levels at significantly reduced hardware costs.

Market Segmentation and Future Trajectories

Luxury vs. Mass Market Divide

The automotive market will likely stratify along performance and price lines. Luxury and high-performance vehicles targeting Level 3+ autonomy will continue investing in long-range LiDAR systems like the M1P, prioritizing system robustness and comprehensive environmental understanding over cost considerations. These applications demand the reliability and performance that only high-end LiDAR can currently provide.

Mass-market vehicles will gravitate toward hybrid architectures combining cost-effective TOF sensors with advanced camera systems and strategic radar placement. This approach can deliver satisfactory Level 2+ functionality while maintaining price competitiveness essential for broad market adoption.

Technology Convergence and Evolution

The boundaries between these technologies continue to blur as both segments advance. Long-range LiDAR costs are declining through manufacturing scale and technological improvements, while TOF and other solid-state solutions including Optical Phased Arrays (OPA), Flash LiDAR, and Frequency Modulated Continuous Wave (FMCW) systems are extending their range and resolution capabilities.

However, fundamental physics limitations suggest that distinct functional roles will persist, with each technology optimized for specific sensing requirements rather than direct competition across all applications.

Strategic Recommendations

For Automotive Manufacturers

Vehicle manufacturers targeting Level 3+ highway autonomy should prioritize high-performance LiDAR integration for primary long-range sensing while deploying TOF sensors for blind-spot and proximity applications. This hybrid approach maximizes system capability while controlling costs through strategic sensor placement.

Manufacturers focused on cost-driven market segments should emphasize sophisticated TOF and camera fusion systems, investing heavily in software development and validation to extract maximum performance from lower-cost hardware configurations.

For System Engineers

Success lies in matching sensor capabilities to specific use cases rather than pursuing maximum technical specifications. A well-integrated, fault-tolerant system architecture that leverages the strengths of multiple sensor types will generally outperform a system that relies on a single high-specification sensor.

For Consumers

Vehicle buyers should avoid equating sensor quantity with autonomous capability. Instead, evaluate vehicles based on their comprehensive safety strategies, validation processes, and real-world performance rather than hardware specifications alone.

Conclusion: A Complementary Future

The future of automotive LiDAR will not be determined by the dominance of a single technology, but rather by the intelligent combination of complementary solutions. Long-range solid-state and MEMS LiDAR systems like RoboSense’s M1P will remain essential for high-speed autonomous driving scenarios, while TOF and short-range solutions will excel in proximity sensing and cost-sensitive applications.

This hybrid deployment strategy, which uses long-range capabilities for critical forward-facing perception and short-range sensors for comprehensive 360-degree awareness, represents the most pragmatic path forward. As hardware costs continue declining and software capabilities advance, the integration of these complementary technologies will define the next generation of autonomous vehicle perception systems.

The mainstream solution will ultimately be characterized not by technological supremacy, but by strategic deployment that matches each sensor’s strengths to specific operational requirements while maintaining the safety, reliability, and cost-effectiveness necessary for broad market adoption.