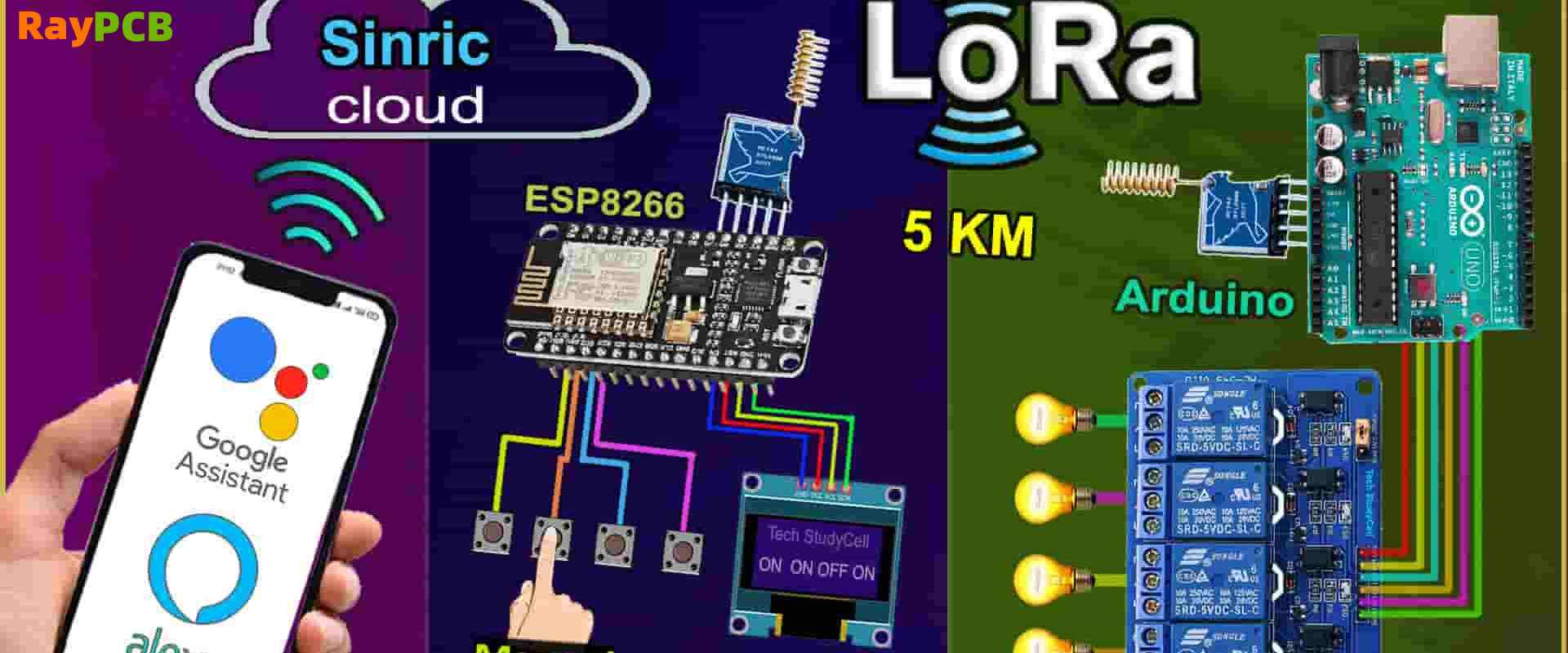

The Internet of Things (IoT) has revolutionized how we collect and transmit data from remote locations, but Wi-Fi offers limited range and cellular connections draw considerable power. LoRaWAN (Long Range Wide Area Network) addresses these limitations with long-range, low-power wireless communication well suited to IoT applications. Combined with the versatile ESP32 microcontroller, it lets you build sensor nodes that transmit data over several kilometers while maintaining long battery life.

This comprehensive guide will walk you through building a complete ESP32 LoRaWAN node using the familiar Arduino IDE environment, covering everything from hardware selection to network deployment.

Understanding LoRaWAN Technology

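LoRaWAN operates in unlicensed ISM bands and uses a star-of-stars topology: end devices transmit to any gateways in range, and the gateways forward the packets to a network server. The specification defines three device classes. Class A devices open two short receive windows after each uplink and otherwise stay idle, which gives the lowest power consumption. Class B devices also open scheduled receive slots synchronized to gateway beacons. Class C devices listen continuously except while transmitting, which gives the lowest downlink latency but the highest power consumption. All three classes are bidirectional. For most sensor applications, Class A provides the best balance of functionality and power efficiency.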

The protocol supports adaptive data rate (ADR), in which the network server tunes each device's spreading factor and transmit power to the link quality it observes. Security is built in through AES-128: a network session key protects message integrity, and a separate application session key encrypts the payload. Because end devices send small, infrequent messages, a single gateway can serve thousands of them, making LoRaWAN well suited to smart city deployments, agricultural monitoring, and industrial IoT applications.

Hardware Requirements and Selection

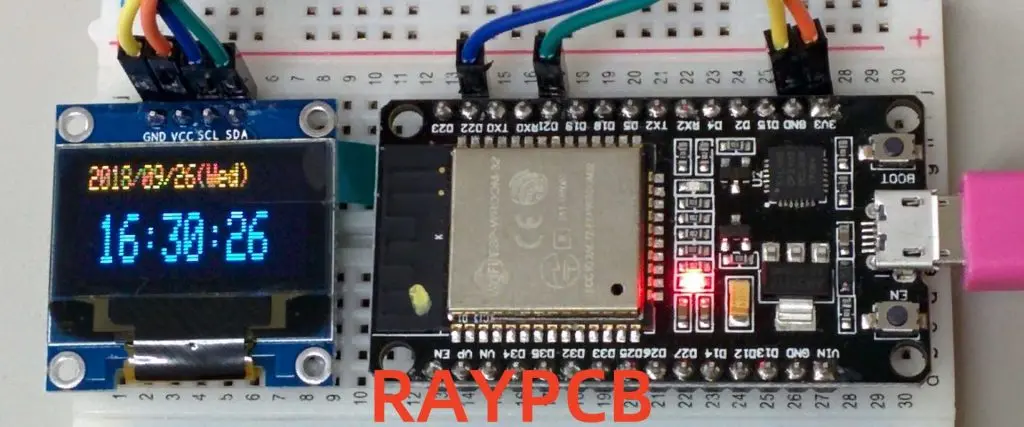

Building a robust ESP32 LoRaWAN node requires careful component selection. The ESP32 serves as your main microcontroller, offering built-in Wi-Fi and Bluetooth capabilities alongside GPIO pins for sensor interfacing. Choose an ESP32 development board with sufficient flash memory (at least 4MB) and adequate GPIO pins for your specific application requirements.

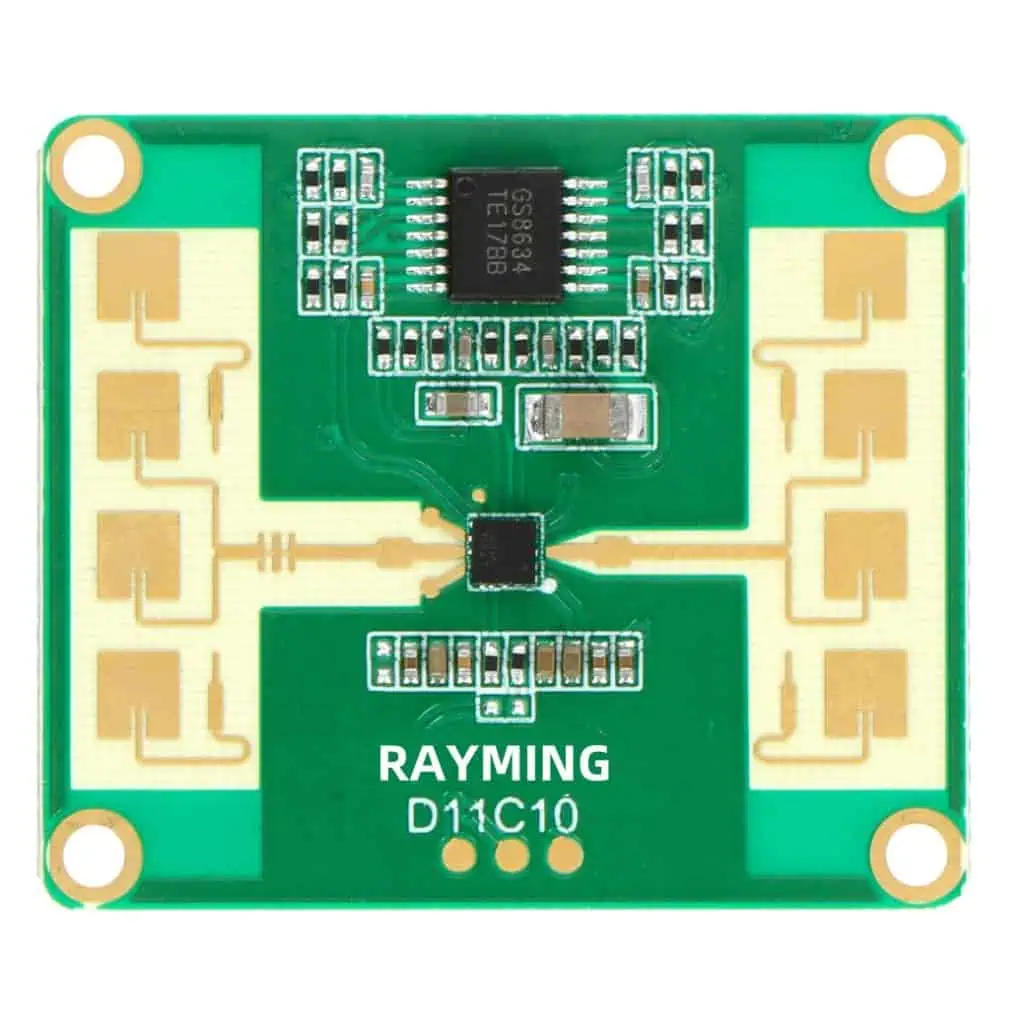

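For LoRa communication, you’ll need a compatible radio module. The Semtech SX1276 is widely supported and covers the 868MHz (Europe) and 915MHz (North America) bands. Its sibling, the SX1278, targets lower bands such as 433MHz, so make sure the chip matches your region. Popular options include the SX1276-based RFM95W module and integrated boards like the Heltec WiFi LoRa 32, which combines the ESP32 and a LoRa radio on a single PCB. Check which radio your board revision uses: the LMIC library used later in this guide supports the SX1272/SX1276 family but not the newer SX126x chips.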

An appropriate antenna is crucial for achieving maximum range. A simple quarter-wavelength wire antenna works for testing. The free-space quarter wavelength, c/(4f), is about 8.6cm at 868MHz and 8.2cm at 915MHz. In practice, the wire is commonly cut a few percent shorter (roughly 8.2cm and 7.8cm) and then trimmed. For production deployments, consider a proper external antenna. Spring antennas or small PCB antennas offer good performance in compact form factors.
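The quarter-wave figures follow from λ/4 = c / (4f). The helpers below sketch that arithmetic. The 0.95 shortening factor is an assumed rule of thumb, not a property of any particular antenna, so trim and test the real wire. The function names are illustrative.

```cpp
// Speed of light in vacuum, in metres per second.
constexpr double kSpeedOfLight = 299792458.0;

// Free-space quarter wavelength in centimetres: lambda / 4 = c / (4 f).
double quarterWaveCm(double freqHz) {
  return kSpeedOfLight / (4.0 * freqHz) * 100.0;
}

// Assumed rule of thumb: cut the wire slightly shorter than the free-space
// value to allow for end effects, then trim while testing.
double practicalCutCm(double freqHz, double shortening = 0.95) {
  return quarterWaveCm(freqHz) * shortening;
}
```

For example, `quarterWaveCm(868e6)` is about 8.63cm, and `practicalCutCm(915e6)` is about 7.78cm.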

Power management components are essential for battery-operated nodes. A low-dropout voltage regulator provides a stable 3.3V supply, and a battery monitoring circuit helps track remaining capacity. Consider adding a solar charging circuit for remote installations that require long-term autonomous operation.

Setting Up the Arduino IDE Environment

Begin by installing the latest version of the Arduino IDE and adding ESP32 board support. Open File > Preferences and add the Espressif board manager URL to the additional boards manager URLs: https://raw.githubusercontent.com/espressif/arduino-esp32/gh-pages/package_esp32_index.json. Then open Tools > Board > Boards Manager, search for “esp32,” and install the esp32 package by Espressif Systems.

Next, install the required libraries for LoRaWAN communication. The MCCI LoRaWAN LMIC library provides comprehensive LoRaWAN stack implementation optimized for Arduino environments. Install it through Library Manager by searching for “MCCI LoRaWAN LMIC library.” This library handles all protocol complexities, including message encryption, frequency hopping, and duty cycle management.
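MCCI LMIC selects its region at compile time rather than at runtime. As a sketch, assume an SX1276-based board used in Europe. The file `project_config/lmic_project_config.h` inside the installed library folder would then contain the lines below; use `CFG_us915` instead for North America. Because the file lives in the library folder, a library update may overwrite it, so recheck it after upgrades.

```c
// project_config/lmic_project_config.h (inside the MCCI LMIC library folder)
// Enable exactly one region.
#define CFG_eu868 1
// #define CFG_us915 1

// Radio chip family on the board (RFM95W-style modules use the SX1276).
#define CFG_sx1276_radio 1
```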

You’ll also need supporting libraries depending on your sensors and requirements. Common additions include:

- the Adafruit Unified Sensor library, for standardized sensor interfaces;
- ArduinoJson, for formatting data locally, such as serial debug output (over-the-air payloads should stay in compact binary);
- sensor-specific libraries, such as those for the BME280 (temperature, humidity, pressure) or GPS modules.

Configure your board settings in Arduino IDE by selecting your specific ESP32 variant under Tools > Board. Set the upload speed to 921600 for faster programming, and ensure the correct COM port is selected. If using a board with integrated LoRa radio, verify the pin definitions match your hardware configuration.

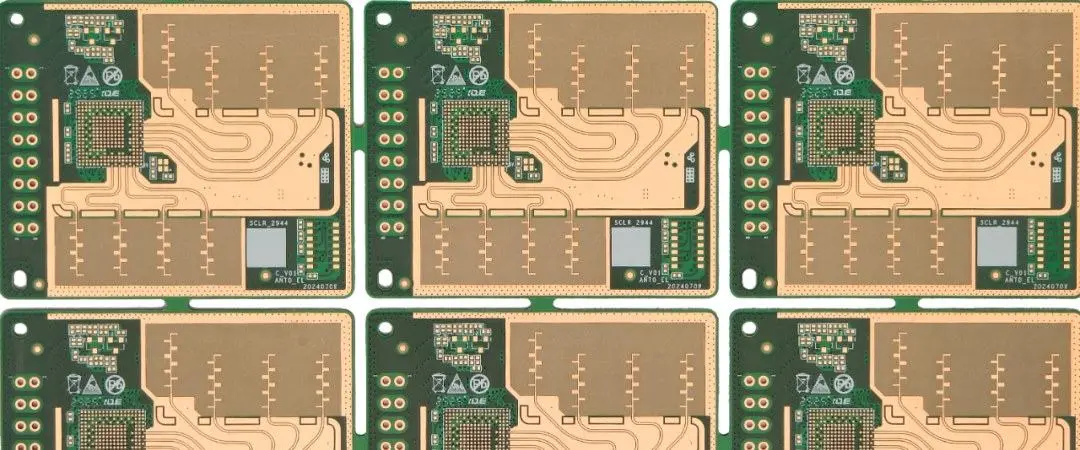

Wiring and Circuit Design

Proper wiring ensures reliable communication between the ESP32 and the LoRa module. If you use separate modules, connect the SX1276/1278 radio to the ESP32 over SPI. Typical connections are SCK to GPIO5, MISO to GPIO19, MOSI to GPIO27, NSS (chip select) to GPIO18, DIO0 to GPIO26, DIO1 to GPIO33, and RST to GPIO14. DIO2 is only needed for FSK mode. These are not the ESP32's default SPI pins (SCK 18, MISO 19, MOSI 23, SS 5), so the firmware must pass them to SPI.begin() explicitly. Verify these pin assignments against your specific hardware.

Power connections require careful attention to avoid noise and ensure stable operation. Connect both modules to a clean 3.3V supply with appropriate decoupling capacitors (100nF ceramic and 10µF electrolytic) placed close to power pins. Include a common ground connection and consider adding ferrite beads on power lines to reduce EMI.

For battery-powered applications, implement proper power management circuits. A voltage divider allows monitoring battery voltage through an analog input, while a MOSFET switch can control power to sensors and peripherals, enabling deep sleep functionality for maximum battery life.
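A voltage divider scales the battery voltage down to a range the ESP32 ADC can read, so the firmware has to scale the reading back up. The sketch below shows that arithmetic. The resistor values, the 3.3V to 4.2V single-cell Li-ion range, and the function names are illustrative assumptions. On recent arduino-esp32 cores, `analogReadMilliVolts()` returns a calibrated pin voltage that could be passed in as `pinMillivolts`.

```cpp
#include <cstdint>

// Divider: R1 from battery+ to the ADC pin, R2 from the ADC pin to GND.
// The pin sees Vbat * R2 / (R1 + R2), so scale back up by (R1 + R2) / R2.
double batteryVoltage(uint32_t pinMillivolts, double r1Ohms, double r2Ohms) {
  return pinMillivolts / 1000.0 * (r1Ohms + r2Ohms) / r2Ohms;
}

// Rough state of charge for one Li-ion cell by linear interpolation between
// assumed empty (3.3 V) and full (4.2 V) voltages. Real discharge curves are
// non-linear, so treat the result as an indication only.
int batteryPercent(double volts, double emptyV = 3.3, double fullV = 4.2) {
  if (volts <= emptyV) return 0;
  if (volts >= fullV) return 100;
  return static_cast<int>((volts - emptyV) / (fullV - emptyV) * 100.0 + 0.5);
}
```

With two equal 100kΩ resistors, a full cell at 4.2V puts 2.1V on the pin, and `batteryVoltage(2100, 100000, 100000)` returns 4.2. High-value resistors keep the divider's constant drain small, about 21µA in this case. The MOSFET switch can disconnect the divider entirely between readings.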

Antenna placement significantly impacts performance. Keep the antenna away from other components and metal objects, use proper ground plane design, and ensure good impedance matching. Consider adding ESD protection components if the antenna is externally accessible.

Programming the Basic LoRaWAN Node

Start with a minimal working example that establishes LoRaWAN connectivity. In the MCCI LMIC library, region-specific parameters (the frequency plan, duty-cycle restrictions, and maximum transmit power) follow from the region selected at compile time. The sketch itself mainly needs to define the radio pin mapping and provide the device keys for Over-The-Air Activation (OTAA).

With LMIC, loop() should do little more than call os_runloop_once() frequently, because the library must open the receive windows at precise times after each uplink. Sensor reading, payload formatting, and sending belong in a job that LMIC runs, rescheduled after each completed transmission. Space transmissions so they respect duty-cycle limits (for example, 1% on the default EU868 channels) and your network's fair-use policy.
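Duty-cycle limits are expressed in airtime, so it helps to estimate how long each uplink occupies the channel. The helpers below sketch the LoRa time-on-air formula from the Semtech SX1276 datasheet. They assume LoRaWAN defaults (8-symbol preamble, explicit header, CRC on, coding rate 4/5) and a frame with no MAC options. The function names are illustrative.

```cpp
#include <algorithm>
#include <cmath>

// Estimated LoRa time-on-air in milliseconds (SX1276 datasheet formula),
// assuming an 8-symbol preamble, explicit header, and CRC enabled.
double loraAirtimeMs(int sf, double bandwidthHz, int phyPayloadBytes,
                     int codingRate = 1 /* 1 = 4/5 ... 4 = 4/8 */) {
  const int preambleSymbols = 8;
  const double tSym = std::pow(2.0, sf) / bandwidthHz * 1000.0;  // ms per symbol
  const int lowDataRateOpt = (tSym > 16.0) ? 1 : 0;  // on for SF11/SF12 at 125 kHz
  const double numerator = 8.0 * phyPayloadBytes - 4.0 * sf + 28.0 + 16.0;  // +16: CRC on
  const double denominator = 4.0 * (sf - 2 * lowDataRateOpt);
  const double payloadSymbols =
      8.0 + std::max(std::ceil(numerator / denominator) * (codingRate + 4), 0.0);
  return (preambleSymbols + 4.25 + payloadSymbols) * tSym;
}

// A LoRaWAN uplink adds 13 bytes to the application payload
// (MHDR 1, DevAddr 4, FCtrl 1, FCnt 2, FPort 1, MIC 4), assuming no FOpts.
int phyPayloadBytes(int appPayloadBytes) { return appPayloadBytes + 13; }

// Minimum spacing between uplinks, in seconds, for a duty-cycle limit
// such as 0.01 (1%).
double minIntervalSeconds(double airtimeMs, double dutyCycle) {
  return airtimeMs / 1000.0 / dutyCycle;
}

// Uplinks that fit in a daily airtime budget, e.g. 30000 ms per day.
int uplinksPerDay(double airtimeMs, double budgetMsPerDay) {
  return static_cast<int>(budgetMsPerDay / airtimeMs);
}
```

At SF7/125kHz, a 10-byte application payload takes about 62ms, which allows one uplink roughly every 6s under a 1% duty cycle. At SF12, the same payload takes about 1.48s. A daily budget of 30s of airtime, the limit in TTN's fair use policy, then fits only 20 such uplinks.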

Here’s a basic framework for the main program structure:

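```cpp
#include <Arduino.h>
#include <SPI.h>
#include <lmic.h>
#include <hal/hal.h>

// OTAA credentials: placeholders, replace with the values from your network server.
// LMIC expects APPEUI (JoinEUI) and DEVEUI least-significant byte first,
// and APPKEY most-significant byte first.
static const u1_t PROGMEM APPEUI[8] = {0};
static const u1_t PROGMEM DEVEUI[8] = {0};
static const u1_t PROGMEM APPKEY[16] = {0};

// LMIC calls these to fetch the keys during the join.
void os_getArtEui(u1_t* buf) { memcpy_P(buf, APPEUI, 8); }
void os_getDevEui(u1_t* buf) { memcpy_P(buf, DEVEUI, 8); }
void os_getDevKey(u1_t* buf) { memcpy_P(buf, APPKEY, 16); }

// Pin mapping for ESP32
const lmic_pinmap lmic_pins = {
    .nss = 18,
    .rxtx = LMIC_UNUSED_PIN,
    .rst = 14,
    .dio = {26, 33, 32},  // DIO0, DIO1, DIO2
};

static osjob_t sendjob;
const unsigned TX_INTERVAL = 300;  // seconds between uplinks; size for your duty cycle

// Read sensors, build the payload, and queue it for transmission.
void do_send(osjob_t* j) {
  if (LMIC.opmode & OP_TXRXPEND) {
    Serial.println(F("Previous TX/RX still pending, not sending"));
    return;
  }
  // Placeholder payload: a 16-bit counter. Replace with your sensor data.
  static uint16_t counter = 0;
  uint8_t payload[2] = {(uint8_t)(counter >> 8), (uint8_t)(counter & 0xFF)};
  counter++;
  LMIC_setTxData2(1, payload, sizeof(payload), 0);  // FPort 1, unconfirmed
}

// LMIC reports join, transmission, and downlink events here.
void onEvent(ev_t ev) {
  switch (ev) {
    case EV_JOINED:
      Serial.println(F("Joined"));
      LMIC_setLinkCheckMode(0);
      os_setCallback(&sendjob, do_send);  // first uplink right after the join
      break;
    case EV_JOIN_FAILED:
      Serial.println(F("Join failed"));
      break;
    case EV_TXCOMPLETE:
      Serial.println(F("TX complete"));
      if (LMIC.dataLen) {
        Serial.printf("Downlink: %d bytes\n", LMIC.dataLen);
      }
      // Schedule the next uplink.
      os_setTimedCallback(&sendjob, os_getTime() + sec2osticks(TX_INTERVAL), do_send);
      break;
    default:
      break;
  }
}

void setup() {
  Serial.begin(115200);
  // Not the ESP32 default SPI pins, so pass them explicitly: SCK, MISO, MOSI, SS
  SPI.begin(5, 19, 27, 18);

  // Initialize LoRaWAN
  os_init();
  LMIC_reset();
  // Tolerate up to 1% clock error by widening the receive windows.
  LMIC_setClockError(MAX_CLOCK_ERROR * 1 / 100);

  // Start OTAA join procedure
  LMIC_startJoining();
}

void loop() {
  // Keep the LMIC scheduler running. Blocking delays or sleeping here would
  // miss the receive windows; deep sleep is only safe after EV_TXCOMPLETE
  // and requires preserving the LMIC session state.
  os_runloop_once();
}
```

Implement robust error handling for network join failures, transmission errors, and sensor malfunctions. Add debugging output to monitor join status, signal strength, and transmission confirmations during development.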

Network Integration and Device Provisioning

Before your device can communicate, it must be registered with a LoRaWAN network server. For development and testing, The Things Network (TTN) provides free community network access, provided a TTN gateway is within range (or you install your own). Create an account, register your application, and add your device with the appropriate keys.

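Configure your device for OTAA, which provides better security than Activation By Personalization (ABP) because fresh session keys are derived at every join. Each device needs three values:

- a DevEUI, which must be globally unique (many modules include a pre-programmed EUI);
- an AppEUI (called JoinEUI in newer LoRaWAN versions), which identifies the application or join server and can be shared by several devices;
- an AppKey, the per-device root key from which the encryption and integrity session keys are derived. It must be unique to each device and kept secret.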
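Byte order is a common cause of join failures. Consoles usually display EUIs and keys most-significant byte first. LMIC, by contrast, expects the DevEUI and AppEUI arrays least-significant byte first and the AppKey most-significant byte first. The small helper below, with an illustrative name, is a sketch of that conversion; it can run on a PC or on the ESP32.

```cpp
#include <cctype>
#include <cstddef>
#include <cstdint>
#include <string>

// Convert a hex string copied from a console (most significant byte first,
// optionally separated by spaces, colons, or dashes) into bytes.
// lsbFirst = true reverses the order, as LMIC wants for DEVEUI and APPEUI;
// use false for APPKEY. Returns false on a bad character or wrong length.
bool hexToBytes(const std::string& hex, uint8_t* out, size_t outLen, bool lsbFirst) {
  std::string clean;
  for (char c : hex) {
    if (std::isxdigit(static_cast<unsigned char>(c))) {
      clean += c;
    } else if (c != ' ' && c != ':' && c != '-') {
      return false;
    }
  }
  if (clean.size() != outLen * 2) return false;
  for (size_t i = 0; i < outLen; ++i) {
    const uint8_t b = static_cast<uint8_t>(std::stoul(clean.substr(2 * i, 2), nullptr, 16));
    out[lsbFirst ? outLen - 1 - i : i] = b;
  }
  return true;
}
```

For example, `hexToBytes("0011223344556677", devEui, 8, true)` fills `devEui` with `0x77, 0x66, ..., 0x00`, ready for a DEVEUI array.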

Set up payload decoders on the network server to convert your binary data into human-readable formats. Create a decoder function that matches your data structure, enabling proper visualization and integration with external platforms. Consider using standardized payload formats like Cayenne LPP for simplified integration.
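Cayenne LPP frames each reading as a channel byte, a type byte, and a fixed-size big-endian value, so one payload can carry several sensors. The sketch below encodes two types by hand to show the format. The type codes and resolutions follow the Cayenne LPP documentation, while the function names are illustrative; ready-made Arduino libraries also exist. The Things Stack offers a built-in CayenneLPP payload formatter that can decode such payloads.

```cpp
#include <cmath>
#include <cstdint>
#include <vector>

// Temperature: type 0x67, 2 bytes, signed, 0.1 degC per bit, MSB first.
void lppAddTemperature(std::vector<uint8_t>& buf, uint8_t channel, float celsius) {
  const int16_t v = static_cast<int16_t>(std::lround(celsius * 10.0f));
  buf.push_back(channel);
  buf.push_back(0x67);
  buf.push_back(static_cast<uint8_t>(static_cast<uint16_t>(v) >> 8));
  buf.push_back(static_cast<uint8_t>(v & 0xFF));
}

// Relative humidity: type 0x68, 1 byte, unsigned, 0.5 % per bit.
void lppAddHumidity(std::vector<uint8_t>& buf, uint8_t channel, float percent) {
  buf.push_back(channel);
  buf.push_back(0x68);
  buf.push_back(static_cast<uint8_t>(std::lround(percent * 2.0f)));
}
```

Encoding 27.2°C on channel 1 and 64% humidity on channel 2 produces the seven bytes `01 67 01 10 02 68 80`.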

Configure downlink handling if your application requires remote control or configuration updates. Implement proper message parsing and response mechanisms. Keep in mind that a Class A device can only receive a downlink in the two short receive windows that follow one of its own uplinks.
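LoRaWAN leaves the meaning of downlink payloads to the application, so you define your own format. The sketch below assumes a hypothetical one: FPort 10 carrying a 2-byte big-endian uplink interval in seconds. In LMIC, a received payload is available after `EV_TXCOMPLETE` at `LMIC.frame + LMIC.dataBeg`, with length `LMIC.dataLen`. When `TXRX_PORT` is set in `LMIC.txrxFlags`, the port byte immediately precedes the payload. The constants and function name below are illustrative.

```cpp
#include <cstddef>
#include <cstdint>

// Hypothetical downlink format (not defined by LoRaWAN):
//   FPort 10, 2 bytes = new uplink interval in seconds, big-endian.
constexpr uint8_t kConfigPort = 10;
constexpr uint16_t kMinIntervalSec = 60;

// Updates intervalSec only for a well-formed message with a sane value.
bool applyDownlink(uint8_t port, const uint8_t* data, size_t len, uint32_t& intervalSec) {
  if (port != kConfigPort || data == nullptr || len != 2) return false;
  const uint16_t requested = static_cast<uint16_t>((data[0] << 8) | data[1]);
  if (requested < kMinIntervalSec) return false;  // too frequent for the duty cycle
  intervalSec = requested;
  return true;
}
```

Rejecting out-of-range values matters, because a malformed or mistaken downlink could otherwise push the node past its duty-cycle budget.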

Advanced Features and Optimization

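Enable adaptive data rate (ADR) to optimize transmission parameters automatically. With ADR, the network server adjusts the spreading factor and transmission power based on the signal quality it observes, which improves overall network efficiency and extends device battery life. In LMIC, ADR is switched on with LMIC_setAdrMode(1). It is intended for stationary nodes, because a moving node's link quality changes faster than the server can track.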

Add comprehensive sensor integration with proper calibration and error handling. Implement sensor fusion for applications requiring multiple measurements, and consider adding local data processing to reduce transmission frequency and payload size.

Develop robust power management strategies for battery-operated deployments. Implement deep sleep modes between transmissions, disable unnecessary peripherals, and use wake-up timers or external interrupts for event-driven operation. Monitor battery voltage and implement low-battery warnings or emergency shutdown procedures.
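A quick way to judge a sleep strategy is to estimate the average current over one transmit period. The helpers below, with illustrative names, sketch that calculation. They ignore self-discharge, regulator quiescent current, and capacity derating.

```cpp
// Average current over one period of a duty-cycled node.
// Currents in mA, times in seconds.
double averageCurrentMa(double activeMa, double activeSec,
                        double sleepMa, double periodSec) {
  const double sleepSec = periodSec - activeSec;
  return (activeMa * activeSec + sleepMa * sleepSec) / periodSec;
}

// Battery life in days for a capacity in mAh at a given average current.
double batteryLifeDays(double capacityMah, double avgCurrentMa) {
  return capacityMah / avgCurrentMa / 24.0;
}
```

With an assumed 100mA for 3s every 10 minutes and 10µA while asleep, the average is about 0.51mA, or roughly 160 days from a 2000mAh cell. Development boards with USB-serial chips and LEDs often draw far more than the ESP32 itself in deep sleep, which can dominate this budget. Deep sleep also clears RAM, so the LMIC session must be preserved (for example, in RTC memory); otherwise, the node rejoins on every wake-up.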

Consider implementing data compression and intelligent sampling strategies to maximize information density while minimizing airtime usage. Use techniques like delta encoding for slowly changing values or implement local thresholds to transmit only significant changes.
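Both techniques are simple to implement, as the sketch below shows. The report-on-change check includes a periodic heartbeat, so the server can still tell a quiet sensor from a dead one. The delta encoder sends the first sample as a 16-bit value scaled by 100 and each later sample as a signed 8-bit difference. The scaling, thresholds, and function names are illustrative assumptions, and the decoder on the server must use the same layout.

```cpp
#include <cmath>
#include <cstddef>
#include <cstdint>
#include <vector>

// Transmit if the value moved by at least `threshold` since the last sent
// value, or if the heartbeat interval has elapsed.
bool shouldReport(float current, float lastSent, float threshold,
                  uint32_t secondsSinceLast, uint32_t heartbeatSec) {
  return std::fabs(current - lastSent) >= threshold || secondsSinceLast >= heartbeatSec;
}

// First sample: int16 (value x100), MSB first. Later samples: int8 deltas.
// Returns false if a value does not fit; the caller should then send full values.
bool deltaEncode(const std::vector<float>& samples, std::vector<uint8_t>& out) {
  out.clear();
  if (samples.empty()) return true;
  long prev = std::lround(samples[0] * 100.0f);
  if (prev < INT16_MIN || prev > INT16_MAX) return false;
  const uint16_t first = static_cast<uint16_t>(static_cast<int16_t>(prev));
  out.push_back(static_cast<uint8_t>(first >> 8));
  out.push_back(static_cast<uint8_t>(first & 0xFF));
  for (size_t i = 1; i < samples.size(); ++i) {
    const long cur = std::lround(samples[i] * 100.0f);
    const long diff = cur - prev;
    if (diff < INT8_MIN || diff > INT8_MAX) return false;
    out.push_back(static_cast<uint8_t>(static_cast<int8_t>(diff)));
    prev = cur;
  }
  return true;
}
```

For example, `deltaEncode({21.50f, 21.52f, 21.49f}, out)` produces `08 66 02 FD`, four bytes instead of the six needed for three 16-bit values.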

Troubleshooting and Best Practices

Common issues include join failures, typically caused by incorrect keys, frequency configuration, or poor radio reception. Verify all parameters match your network server configuration and ensure adequate antenna performance. Use debug output to monitor join attempts and response timing.

Range issues often stem from antenna problems, interference, or inappropriate transmission parameters. Test with line-of-sight conditions first, then gradually introduce obstacles while monitoring signal strength and packet success rates.
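Packet success rate can be estimated from the frame counters the network server received. Each new uplink increments the frame counter FCnt, while retransmissions reuse it. The sketch below uses an illustrative function name and assumes the counters are sorted, contain no duplicates, and did not reset through a rejoin during the test.

```cpp
#include <cstdint>
#include <vector>

// Fraction of uplinks received between the first and last received FCnt.
double deliveryRatio(const std::vector<uint32_t>& receivedFcnt) {
  if (receivedFcnt.empty()) return 0.0;
  const uint32_t span = receivedFcnt.back() - receivedFcnt.front() + 1;
  return static_cast<double>(receivedFcnt.size()) / span;
}
```

Counters `10, 11, 13, 14, 17` give 5 received out of 8 sent, a ratio of 0.625. The estimate does not count packets lost before the first or after the last received frame.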

Power consumption higher than expected usually indicates improper sleep implementation or sensors remaining active during idle periods. Use current measurement tools to identify power-hungry components and verify sleep mode operation.

Implement proper firmware update mechanisms for deployed devices, considering the challenges of remote access and limited downlink capacity. Design your update process to handle interrupted transfers and provide rollback capabilities.

Conclusion and Future Development

Building an ESP32 LoRaWAN node opens possibilities for countless IoT applications, from environmental monitoring to asset tracking. The combination of ESP32’s processing power and LoRaWAN’s long-range capabilities creates a powerful platform for distributed sensing networks.

Future enhancements might include edge computing capabilities, machine learning inference for local data processing, or integration with other wireless protocols for hybrid connectivity solutions. As LoRaWAN networks continue expanding globally, your ESP32 nodes can participate in increasingly sophisticated IoT ecosystems.

The foundation you’ve built provides a starting point for more complex applications. Consider exploring advanced features like multicast communications, Class B scheduling, or custom payload encryption for specialized security requirements. With proper design and implementation, your ESP32 LoRaWAN nodes can operate reliably for years, providing valuable data for decision-making and automation systems.

Remember that successful IoT deployments require careful planning of network coverage, device management, and data integration. Start with small pilot projects to validate your approach before scaling to larger deployments, and always consider the long-term maintenance and support requirements of your IoT infrastructure.